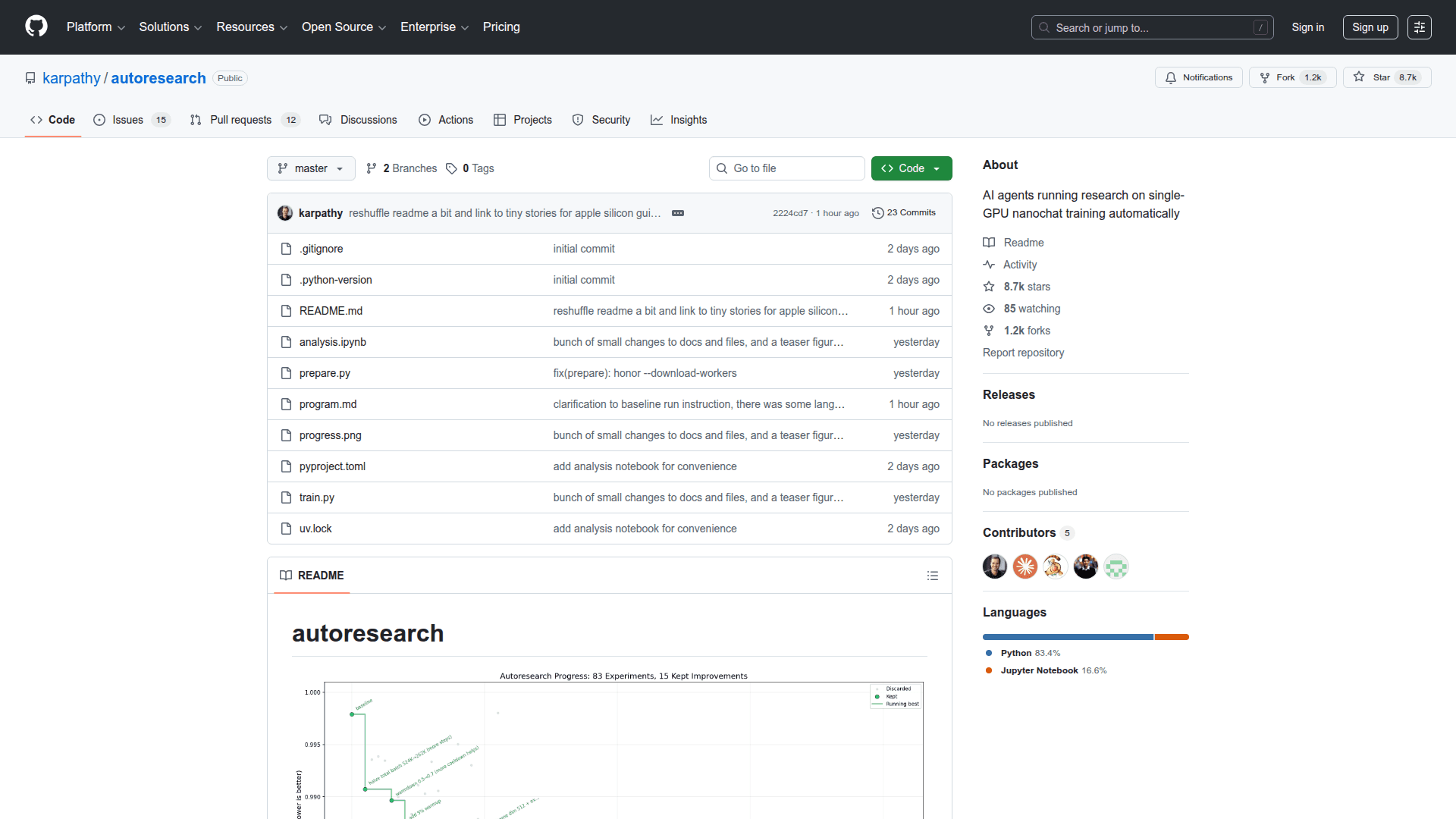

autoresearch

Autonomous AI agent that iteratively experiments on single-GPU LLM training code overnight.

About autoresearch

autoresearch is an open-source framework that lets an AI agent run machine learning experiments autonomously. You point a coding agent (Claude, Codex, or similar) at a small but real LLM training setup. The agent then modifies the training code, runs a 5-minute training experiment, checks whether the validation metric improved, keeps or discards the change, and repeats. By morning you have a log of experiments and (hopefully) a better model.
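
The modify, train, evaluate, keep-or-discard cycle described above can be sketched as a greedy loop. This is a minimal illustration, not the project's actual code: `keep_or_discard_loop` and the `run_experiment` callable are hypothetical names, and `run_experiment` stands in for "apply a change to train.py, train for 5 minutes, return val_bpb".

```python
from typing import Callable, List, Tuple


def keep_or_discard_loop(
    baseline_bpb: float,
    candidates: List[str],
    run_experiment: Callable[[str], float],
) -> Tuple[float, List[Tuple[str, float, bool]]]:
    """Greedy search: keep a change only if it lowers val_bpb."""
    best = baseline_bpb
    log: List[Tuple[str, float, bool]] = []
    for change in candidates:
        # Hypothetical hook: apply the change, train, return the validation metric.
        bpb = run_experiment(change)
        kept = bpb < best  # lower bits-per-byte is better
        if kept:
            best = bpb
        log.append((change, bpb, kept))
    return best, log
```

The returned log plays the role of the overnight experiment record: one entry per attempted change, with its metric and whether it was kept.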

The training base is a simplified single-GPU implementation of nanochat, a minimal GPT training codebase. The human's role shifts from writing Python to writing program.md, a Markdown file that holds the agent's standing instructions and research strategy. The agent edits only train.py, which contains the full model architecture, optimizer, and training loop.

- Fixed Time Budget - Every experiment runs for exactly 5 wall-clock minutes, making results directly comparable regardless of architecture or hyperparameter changes

- Single Metric - Validation bits-per-byte (val_bpb) is the objective; lower is better, and because the metric does not depend on vocabulary size, architectural changes are compared fairly

- Single File Editing - The agent only modifies train.py, keeping diffs small and reviewable

- program.md Interface - Human researchers guide the agent by editing a Markdown instruction file rather than Python code

- Self-Contained - No distributed training, no complex configs; one GPU, one file, one metric

- Autonomous Loop - Roughly 12 experiments per hour at 5 minutes each, or about 100 in an overnight run

- MIT Licensed - Fully open source under the permissive MIT license
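
To make the single metric concrete, here is a minimal sketch of the usual bits-per-byte definition: the summed cross-entropy over the validation set (in nats) is converted to bits and divided by the number of bytes of underlying text. The function name and arguments are illustrative assumptions; how autoresearch itself computes val_bpb may differ in detail.

```python
import math


def bits_per_byte(total_nll_nats: float, total_bytes: int) -> float:
    """Convert summed token-level cross-entropy (nats) to bits per byte of text."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    # Divide by ln(2) to turn nats into bits, then normalize by bytes, not tokens.
    return total_nll_nats / (math.log(2) * total_bytes)
```

Normalizing by bytes rather than by tokens is what keeps the number comparable across tokenizers: a larger vocabulary yields fewer tokens for the same text, but the byte count in the denominator stays the same.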

To get started, clone the repository, install dependencies via uv, run prepare.py once to download data and train a BPE tokenizer, then spin up your AI coding agent pointed at program.md.

Pricing

Open Source

Fully open source under MIT license. No cost to use, modify, or distribute.

- Full source code access

- Autonomous AI research loop

- MIT license

Capabilities

Key Features

- Autonomous AI agent loop: modify → train → evaluate → keep or discard

- Fixed 5-minute wall-clock training budget per experiment

- Validation bits-per-byte (val_bpb) as the single comparable metric

- Agent-editable train.py with full GPT model, Muon+AdamW optimizer, and training loop

- Human-editable program.md for setting agent research strategy

- ~100 experiments possible in a single overnight run

- Single NVIDIA GPU support (tested on H100)

- MIT license — fully open source

- Built on nanochat, a minimal GPT training codebase
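
The fixed wall-clock budget listed above could be enforced along these lines. This is a sketch under stated assumptions, not the code in train.py: `train_for_budget`, `train_step`, and the injectable `clock` parameter are hypothetical names chosen for illustration.

```python
import time
from typing import Callable


def train_for_budget(
    train_step: Callable[[], None],
    budget_seconds: float = 300.0,  # 5 minutes, matching the budget described above
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Run train_step repeatedly until the wall-clock budget is spent; return the step count."""
    deadline = clock() + budget_seconds
    steps = 0
    # The budget is checked before each step, so the final step may finish slightly past the deadline.
    while clock() < deadline:
        train_step()
        steps += 1
    return steps
```

Because the stopping condition is elapsed time rather than a step count, a faster architecture simply completes more steps within the same budget. At 300 seconds per run, at most 3600 / 300 = 12 runs fit in an hour, before any startup or evaluation overhead.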