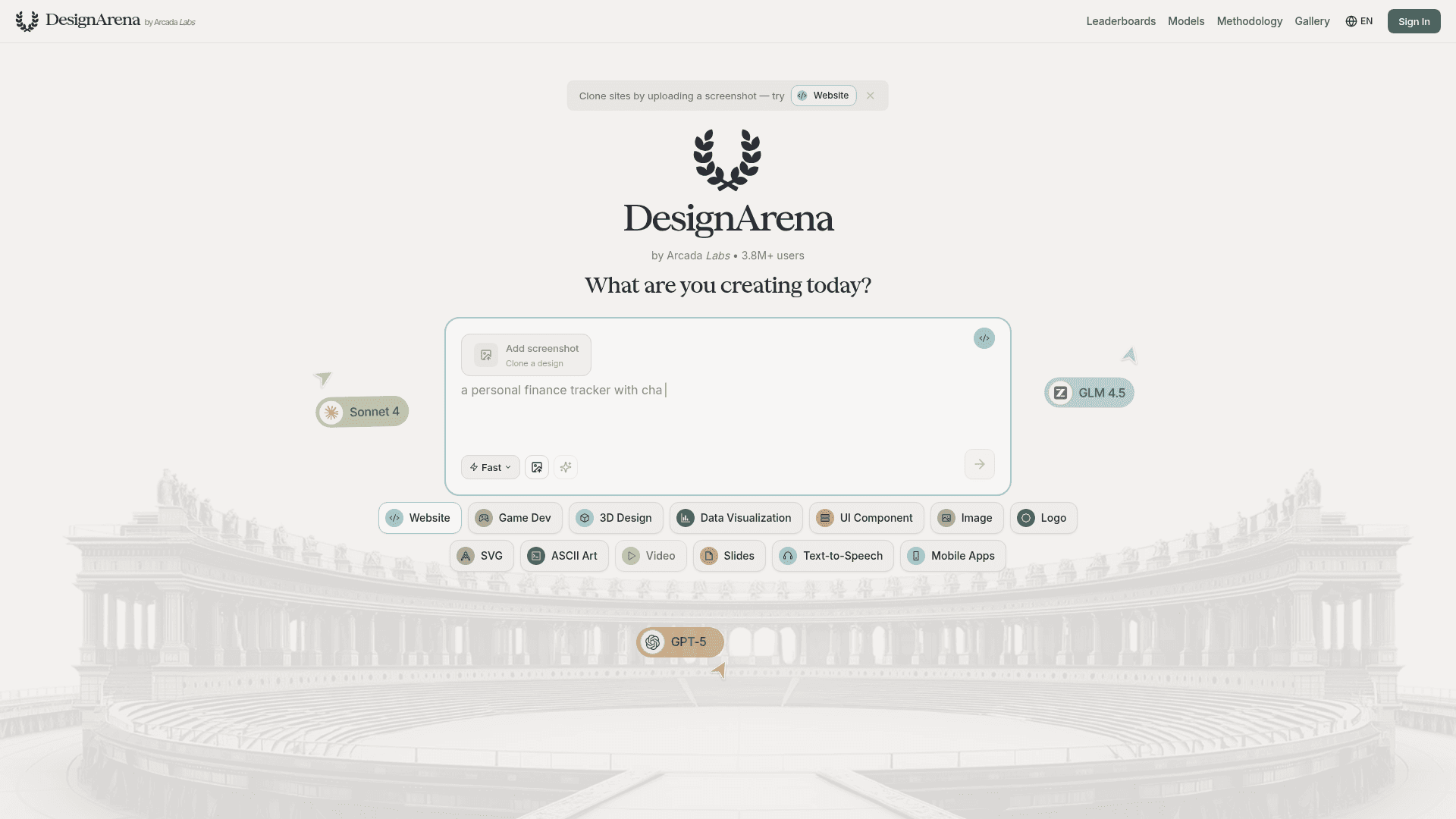

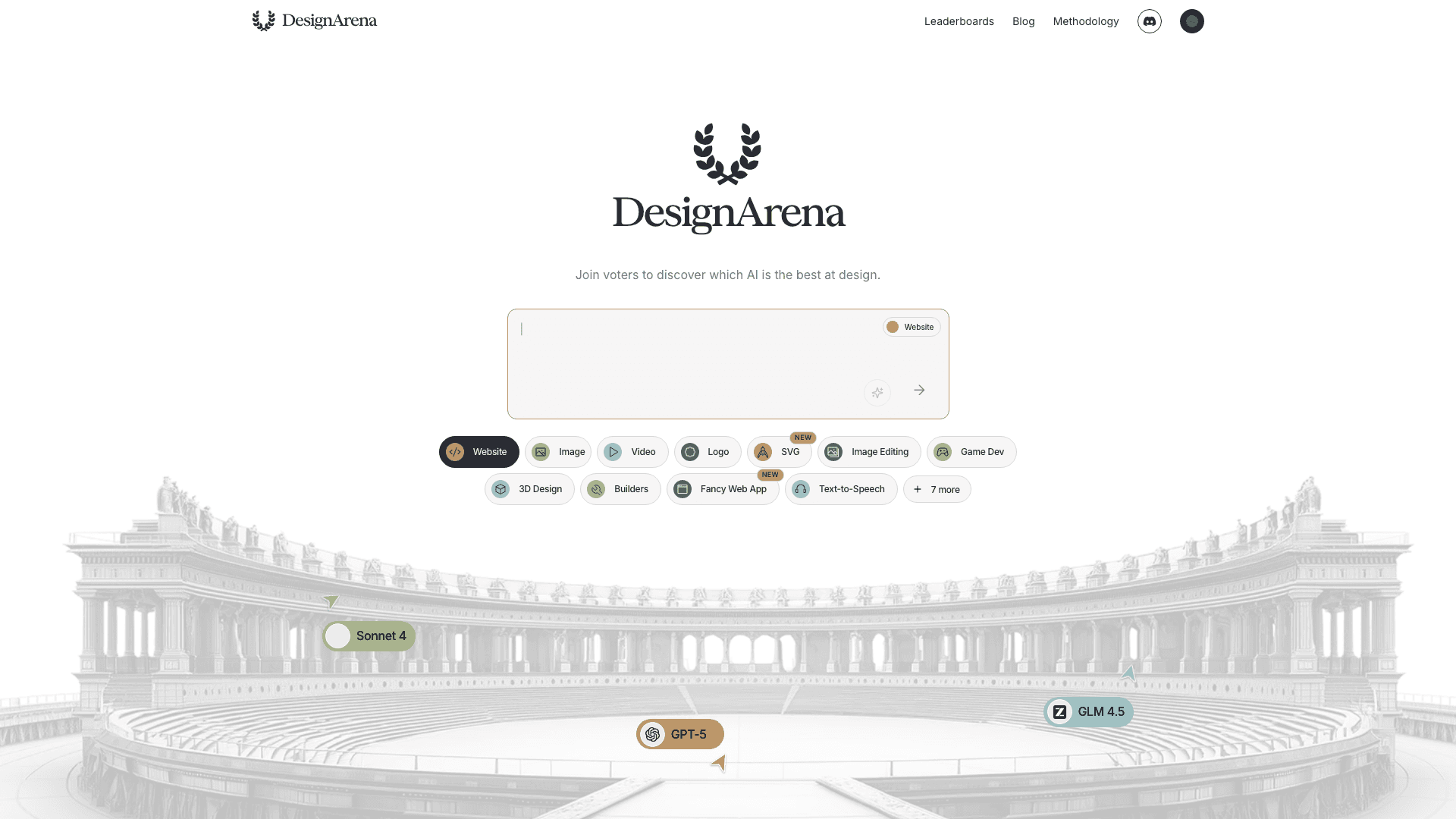

Design Arena

Crowdsourced benchmark for AI-generated design. Users vote on head-to-head outputs (web UI, images, video, audio) to rank models by human preference.

About Design Arena

Design Arena is the world's first crowdsourced benchmark platform dedicated to evaluating AI-generated design quality through real human preference testing. Built by Y Combinator S25 company Arcada Labs, it uses head-to-head comparisons where users vote on anonymous AI outputs across categories like websites, UI components, images, video, audio, logos, and data visualizations. The platform applies a Bradley-Terry rating system (Elo-style scoring) to aggregate thousands of votes into transparent public leaderboards that reveal which AI models produce designs people actually prefer.

How Design Arena Works

Design Arena shows two AI-generated outputs side by side, both produced from the same prompt. Users vote for the design they prefer, and each vote feeds the Bradley-Terry (Elo-style) ranking, which updates in real time. Captcha-based bot protection is intended to keep non-human votes out of the benchmark.
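To make the "vote in, ranking out" loop concrete, here is a minimal sketch of a classic online Elo update applied to a single vote. It is an illustration only: the K-factor of 32, the 400-point scale, the starting rating of 1500, and the names `expected_score`, `update_elo`, `model_x`, and `model_y` are assumptions for this example, not Design Arena's published parameters or code.

```python
def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A is preferred over B under the Elo logistic model."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one head-to-head vote.

    k is an assumed K-factor; larger values make ratings react faster.
    """
    expected_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_a)
    # Whatever A gains, B loses, so the total rating pool stays constant.
    return rating_a + delta, rating_b - delta


# Hypothetical models that both start at 1500; a voter prefers model_x.
ratings = {"model_x": 1500.0, "model_y": 1500.0}
ratings["model_x"], ratings["model_y"] = update_elo(
    ratings["model_x"], ratings["model_y"], a_won=True
)
print(ratings)  # {'model_x': 1516.0, 'model_y': 1484.0}
```

An upset (a low-rated model beating a high-rated one) moves ratings more than an expected result, which is why a stream of individual votes can reorder a leaderboard over time.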

Design Arena Categories

| Arena | What It Benchmarks | Example Tools |
|---|---|---|
| Model Arena | LLMs generating single-file HTML/CSS/JS code | OpenAI, Anthropic, Google Gemini, xAI, DeepSeek, Mistral |
| Builder Arena | Vibe-coding tools deploying complete web apps | Lovable, Bolt, v0, Replit, Cursor, Devin, Firebase Studio |
| Mobile Builder Arena | Mobile app generators | Rork, Blink.new |
| Image Arena | Image diffusion models | Midjourney, Black Forest Labs, Ideogram, Recraft |
| Video Arena | Video generation models | Luma Labs, Kling AI, Pika, Midjourney |
| Slides Arena | Presentation generators | SlidesGPT, Gamma |

Design Arena Model Coverage

Design Arena tracks 50+ LLMs, 12+ image models, 4+ video models, and 22+ audio models across its specialized arenas. Each arena uses its own prompts and evaluation criteria, so models are compared only against others in the same domain.

Design Arena Features

- Elo-Based Rankings - Uses the Bradley-Terry model to calculate win rates and Elo scores from pairwise comparisons, providing statistically robust rankings

- Micro Evals - Automated code evaluations that test agent-generated apps for specific technical criteria like Next.js routing, Tailwind implementation, and Vercel deployment

- Transparent Methodology - Publishes all system prompts, evaluation methods, and ranking formulas openly so users can verify rankings reflect genuine community preferences

- Private Enterprise Evaluations - Offers companies secure version-over-version testing to track model improvements and accelerate R&D cycles with human preference data
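As a rough illustration of how a Bradley-Terry model can turn pairwise votes into a ranking, the sketch below fits strengths with the standard minorization-maximization (MM) update and maps them onto an Elo-like scale. It is not Design Arena's implementation: the function names, the 400-point scale, the anchor of 1000, and the sample votes are all assumptions. It also assumes every vote involves two distinct models and every model has at least one win.

```python
import math
from collections import defaultdict


def fit_bradley_terry(votes, iterations=200):
    """Fit Bradley-Terry strengths from (winner, loser) votes using MM updates.

    Returns a dict of strengths that sum to 1.
    """
    wins = defaultdict(float)
    pair_counts = defaultdict(float)  # votes per unordered pair of models
    for winner, loser in votes:
        wins[winner] += 1.0
        pair_counts[frozenset((winner, loser))] += 1.0
    models = sorted({m for pair in pair_counts for m in pair})
    strength = {m: 1.0 / len(models) for m in models}

    for _ in range(iterations):
        updated = {}
        for m in models:
            denom = 0.0
            for pair, n in pair_counts.items():
                if m in pair:
                    (other,) = pair - {m}
                    denom += n / (strength[m] + strength[other])
            updated[m] = wins[m] / denom
        total = sum(updated.values())
        strength = {m: s / total for m, s in updated.items()}
    return strength


def to_elo_scale(strength, anchor=1000.0, scale=400.0):
    """Map strengths to Elo-like scores centered on `anchor` (assumed values)."""
    logs = {m: math.log10(s) for m, s in strength.items()}
    mean_log = sum(logs.values()) / len(logs)
    return {m: anchor + scale * (v - mean_log) for m, v in logs.items()}


# Hypothetical vote log: (preferred model, other model).
votes = (
    [("a", "b")] * 3 + [("b", "a")]
    + [("b", "c")] * 2 + [("c", "b")]
    + [("a", "c")] * 2 + [("c", "a")]
)
strengths = fit_bradley_terry(votes)
scores = to_elo_scale(strengths)
leaderboard = sorted(scores, key=scores.get, reverse=True)
print(leaderboard)  # ['a', 'b', 'c']
```

Unlike an online Elo update, this batch fit does not depend on the order in which votes arrive, which is one reason a platform might prefer it for a public leaderboard.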

Getting Started with Design Arena

Visit the arena to vote on design matchups and explore leaderboards to discover which AI models excel at specific design tasks.

Pricing

Free

Full public access to Design Arena

- Head-to-head voting on AI designs

- Access to all arena categories

- Public leaderboard access

- Create and view tournaments

- Real-time Elo rankings

Enterprise

Private evaluations for AI companies and teams

- Private benchmark environments

- Version-over-version model testing

- Proprietary evaluation workflows

- Human preference data for R&D

- Custom prompt sets

- Analytics and performance tracking

- API access for workflow integration

Capabilities

Key Features

- Head-to-head voting UI for AI-generated outputs

- Public leaderboards ranked by live community votes

- Model comparison studio that does not affect rankings

- Human vs AI challenge mode (“Humanity”)

- Published methodology and system prompts

- Captcha/bot-resistance for human-only ratings

- Private evaluations for enterprises
