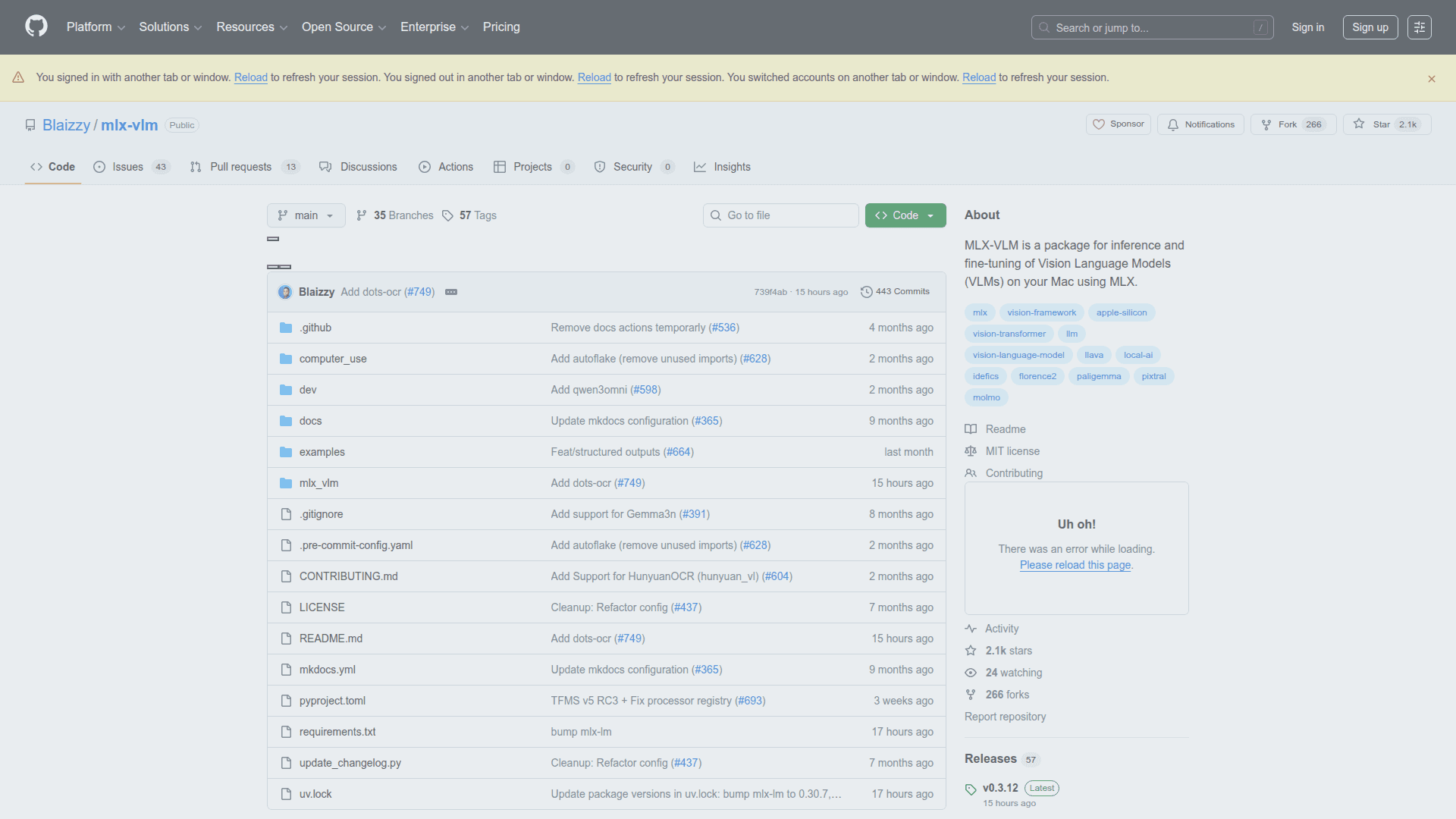

MLX-VLM

A Python library for running Vision Language Models on Apple Silicon using the MLX framework.

At a Glance

Free and open source library

Listed Feb 2026

About MLX-VLM

MLX-VLM is a Python library for running Vision Language Models (VLMs) locally on Apple Silicon devices using Apple's MLX framework. It lets developers and researchers run multimodal models directly on Mac hardware, without cloud services or external APIs.

The library provides a streamlined interface for working with various vision-language models, making it easy to perform tasks like image understanding, visual question answering, and multimodal content generation. MLX-VLM takes advantage of Apple Silicon's unified memory architecture and the MLX framework's optimizations to deliver efficient inference performance.

- Local Inference - Run vision language models entirely on your Mac without sending data to external servers, ensuring privacy and reducing latency for real-time applications.

- Apple Silicon Optimization - Built specifically for the MLX framework, the library leverages Apple Silicon's unified memory and GPU capabilities for efficient model execution on M1, M2, and M3 chips.

- Multiple Model Support - Compatible with various vision-language model architectures, allowing users to choose the best model for their specific use case and hardware constraints.

- Python Integration - Provides a clean Python API that integrates seamlessly with existing machine learning workflows and data science pipelines.

- Easy Installation - Install via pip with `pip install mlx-vlm` and start running vision language models with minimal configuration required.

- Open Source - Fully open source under a permissive license, enabling community contributions, customizations, and transparency in how models are executed.
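
Since the library targets Apple Silicon and is installed with pip, it can help to check both conditions before loading a model. The following is a minimal sketch using only the Python standard library; the helper names (`is_apple_silicon`, `mlx_vlm_installed`, `environment_report`) are illustrative and not part of MLX-VLM, and the check assumes the package is importable as `mlx_vlm`.

```python
import importlib.util
import platform


def is_apple_silicon() -> bool:
    """Return True when running on macOS with an arm64 CPU.

    A Python interpreter running under Rosetta reports x86_64 here,
    so this returns False in that case even on Apple Silicon hardware.
    """
    return platform.system() == "Darwin" and platform.machine() == "arm64"


def mlx_vlm_installed() -> bool:
    """Return True if the mlx_vlm package can be located, without importing it."""
    return importlib.util.find_spec("mlx_vlm") is not None


def environment_report() -> dict:
    """Collect both checks into one dictionary for logging or display."""
    return {
        "apple_silicon": is_apple_silicon(),
        "mlx_vlm_installed": mlx_vlm_installed(),
    }


print(environment_report())
```

If `mlx_vlm_installed` is reported as false, running `pip install mlx-vlm` in the same Python environment should resolve it.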

To get started, install the package using pip and import it into your Python project. The library handles model loading, image preprocessing, and inference execution, allowing you to focus on building applications rather than managing low-level details. Documentation and examples are available in the GitHub repository to help you quickly integrate vision-language capabilities into your projects.
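
The load, preprocess, and generate flow described above might look roughly like the sketch below. It follows the usage pattern shown in the project's README (`load`, `generate`, `apply_chat_template`, `load_config`), but argument order and return types have changed between releases, so treat the exact call shapes as assumptions and check the repository for your installed version. The wrapper `describe_image` is a hypothetical helper, and the model identifier is just one example of a community-converted checkpoint.

```python
def describe_image(
    image,
    prompt="Describe this image.",
    model_path="mlx-community/Qwen2-VL-2B-Instruct-4bit",  # example model, an assumption
    max_tokens=100,
):
    """Load a VLM and generate a text response for a single image.

    `image` is a local file path or URL. Requires Apple Silicon and mlx-vlm.
    """
    # Imported lazily so that defining this function works on machines without MLX.
    from mlx_vlm import load, generate
    from mlx_vlm.prompt_utils import apply_chat_template
    from mlx_vlm.utils import load_config

    # Model loading: weights are fetched on first use and cached locally.
    model, processor = load(model_path)
    config = load_config(model_path)

    # Wrap the raw prompt in the chat template the model expects.
    formatted_prompt = apply_chat_template(processor, config, prompt, num_images=1)

    # Image preprocessing and inference both happen inside generate().
    # Keyword arguments are used because positional order differs across versions.
    return generate(
        model,
        processor,
        prompt=formatted_prompt,
        image=[image],
        max_tokens=max_tokens,
        verbose=False,
    )


# Example call (downloads the model on first run):
# result = describe_image("http://images.cocodataset.org/val2017/000000039769.jpg")
# print(result)
```

Depending on the installed version, the returned value may be a plain string or a result object that carries the generated text, so printing it is the safest way to inspect the output.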

Pricing

Open Source

Free and open source library

- Full source code access

- Local inference on Apple Silicon

- Multiple VLM support

- Python API

- Community support

Capabilities

Key Features

- Local vision language model inference

- Apple Silicon optimization via MLX framework

- Multiple VLM architecture support

- Python API

- Image understanding and visual QA

- Multimodal content generation

- Unified memory utilization

- Command-line interface
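
As a rough illustration of the command-line interface, the sketch below builds an invocation of the `mlx_vlm.generate` module from Python. The flags used here (`--model`, `--image`, `--prompt`, `--max-tokens`) match those shown in the project's README at the time of writing, but they are an assumption for any given release; the helper names and the file `cat.jpg` are hypothetical.

```python
import shlex
import subprocess
import sys


def build_generate_command(model, image, prompt, max_tokens=100):
    """Build the argument list for running mlx_vlm.generate as a module."""
    return [
        sys.executable, "-m", "mlx_vlm.generate",
        "--model", model,
        "--image", image,
        "--prompt", prompt,
        "--max-tokens", str(max_tokens),
    ]


def run_generate(model, image, prompt, max_tokens=100):
    """Run the CLI and return its standard output (requires mlx-vlm installed)."""
    cmd = build_generate_command(model, image, prompt, max_tokens)
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout


# Show the equivalent shell command without executing it.
command = build_generate_command(
    "mlx-community/Qwen2-VL-2B-Instruct-4bit",  # example model, an assumption
    "cat.jpg",                                   # hypothetical local image
    "What is in this picture?",
)
print(shlex.join(command))
```

Passing the arguments as a list rather than a single string avoids shell quoting problems when the prompt contains spaces or punctuation.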