Torrix

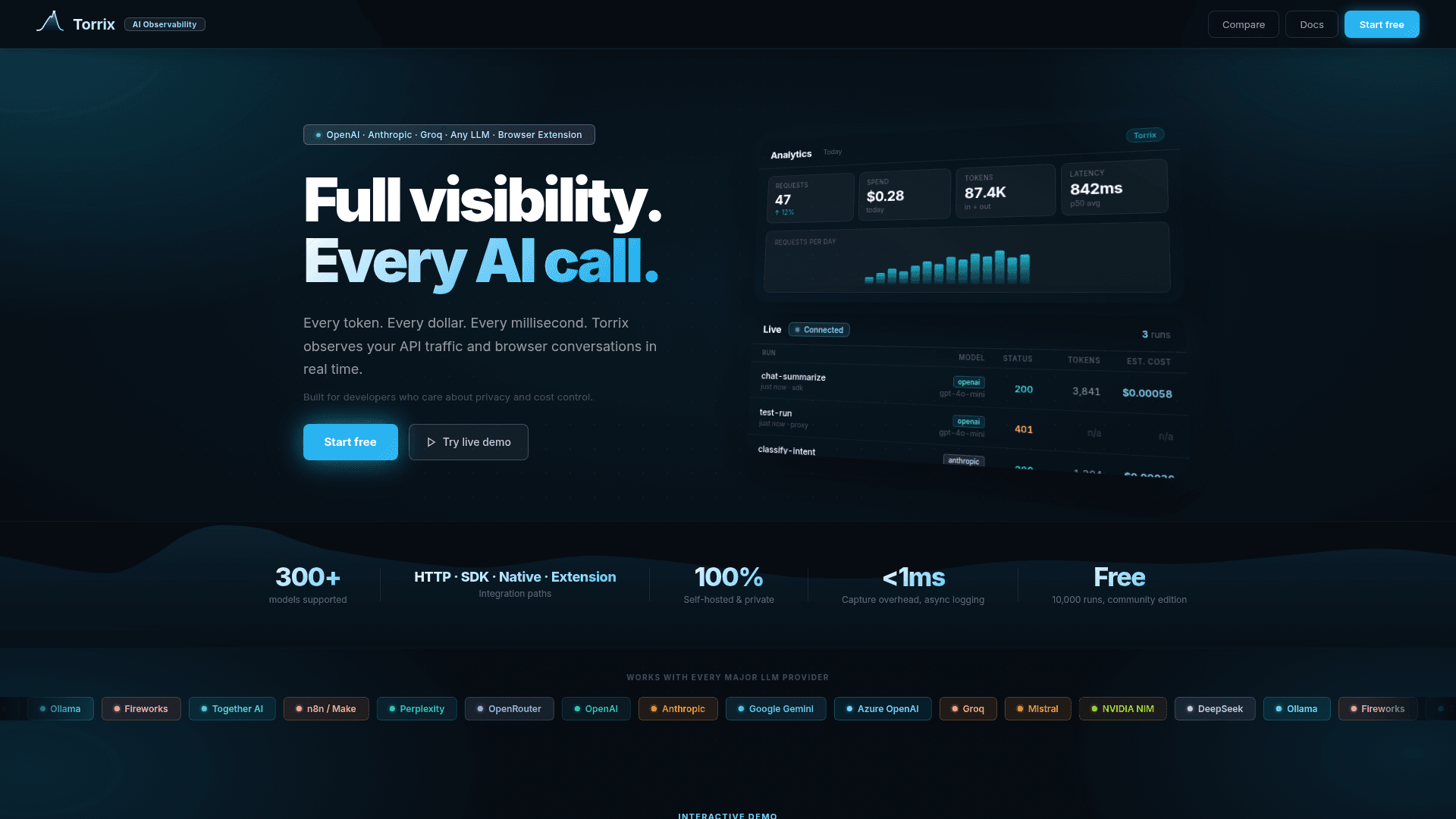

Self-hosted AI observability platform that tracks every LLM API call with real-time cost, token, and latency visibility across 300+ models and providers.

At a Glance

Self-hosted, free forever. Everything you need to observe your LLMs with your data on your server.

Listed May 2026

About Torrix

Torrix is a self-hosted AI observability tool built for developers who want full visibility into their LLM API traffic without sending data to a third-party cloud. It captures every token, dollar, and millisecond from API calls and browser conversations in real time, and deploys on your own infrastructure in about 60 seconds via Docker.

What It Is

Torrix sits between your application and any LLM provider — OpenAI, Anthropic, Google Gemini, Groq, Mistral, Azure OpenAI, NVIDIA NIM, DeepSeek, Ollama, and more — and logs every request as a structured trace. The dashboard surfaces cost, token usage, latency percentiles, error rates, and full prompt/response bodies. Because it is self-hosted by default, prompts and responses never leave your server.
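To make the cost and latency figures concrete, the sketch below shows one way a per-call cost and a latency percentile could be derived from trace records. It is not Torrix's implementation: the `Trace` fields, the `example-model` name, and the prices are hypothetical, and the nearest-rank percentile method is an assumption.

```python
import math
from dataclasses import dataclass

# Hypothetical prices in USD per 1M tokens. Real prices differ per provider
# and change over time.
PRICES_PER_MILLION = {
    "example-model": {"input": 1.00, "output": 2.00},
}


@dataclass
class Trace:
    """Hypothetical shape of one logged LLM call."""
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float


def call_cost(trace: Trace) -> float:
    """Dollar cost of one call: tokens times the per-million-token price."""
    price = PRICES_PER_MILLION[trace.model]
    total = trace.input_tokens * price["input"] + trace.output_tokens * price["output"]
    return total / 1_000_000


def latency_percentile(traces: list[Trace], pct: float) -> float:
    """Nearest-rank percentile of latency over a non-empty list of traces."""
    ordered = sorted(t.latency_ms for t in traces)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]
```

With these placeholder prices, a call using 1,000 input tokens and 500 output tokens costs $0.002.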

How It Integrates With Your Stack

Torrix offers multiple integration paths with minimal code changes:

- Python and Node.js SDKs with auto-instrumentation: call `torrix.init()` once and every LLM call in your codebase is captured automatically, including streaming responses.

- Go, C#/.NET, and Java SDKs for manual ingest with zero external dependencies.

- HTTP proxy for any tool that speaks HTTP — curl, n8n, Make, GitHub Copilot, SAP AI Core, or any OpenAI-compatible endpoint.

- Browser extension that intercepts ChatGPT, Claude, Gemini, Perplexity, Grok, and Mistral conversations directly, with no proxy or code changes.

- OpenTelemetry receiver at `/v1/traces` for applications already instrumented with the OTel SDK.

- MCP server built in at `/mcp`, compatible with Claude Code, Cursor, Windsurf, and n8n.

- n8n community node (`@torrix-ai/n8n-nodes-torrix`) for native workflow integration.
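For the OpenTelemetry path, the sketch below builds a single span in the standard OTLP/HTTP JSON encoding and prepares a POST to `/v1/traces` using only the Python standard library. The host and port, the service and scope names, and the choice of `gen_ai.*` attributes are assumptions. Which attributes Torrix actually reads, and whether the endpoint requires authentication, is not stated here.

```python
import json
import secrets
import time
import urllib.request

TORRIX_URL = "http://localhost:8080"  # hypothetical host and port


def build_otlp_payload(model, input_tokens, output_tokens, duration_ms,
                       service_name="my-app"):
    """Build one OTLP/JSON span describing a finished LLM call."""
    end_ns = time.time_ns()
    start_ns = end_ns - int(duration_ms * 1_000_000)
    span = {
        "traceId": secrets.token_hex(16),  # 16 random bytes as 32 hex chars
        "spanId": secrets.token_hex(8),    # 8 random bytes as 16 hex chars
        "name": f"chat {model}",
        "kind": 3,  # SPAN_KIND_CLIENT
        "startTimeUnixNano": str(start_ns),
        "endTimeUnixNano": str(end_ns),
        "attributes": [
            {"key": "gen_ai.request.model", "value": {"stringValue": model}},
            {"key": "gen_ai.usage.input_tokens", "value": {"intValue": str(input_tokens)}},
            {"key": "gen_ai.usage.output_tokens", "value": {"intValue": str(output_tokens)}},
        ],
    }
    resource = {"attributes": [
        {"key": "service.name", "value": {"stringValue": service_name}},
    ]}
    scope_spans = [{"scope": {"name": "manual-example"}, "spans": [span]}]
    return {"resourceSpans": [{"resource": resource, "scopeSpans": scope_spans}]}


def build_request(payload):
    """Prepare (but do not send) the POST to the OTLP/HTTP traces path."""
    return urllib.request.Request(
        TORRIX_URL + "/v1/traces",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the result of `build_request(...)` to `urllib.request.urlopen` would send the span. In an application that already uses the OTel SDK, pointing its OTLP/HTTP exporter at the same path is the more usual route.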

Key Observability Capabilities

Torrix goes beyond basic logging with a range of analysis and control features:

- Real-time cost tracking with per-call dollar amounts and projected month-end spend based on daily velocity.

- Full prompt and response traces including finish reason, tool calls, reasoning steps (OpenAI o1/o3/o4, DeepSeek R1, Claude extended thinking, Gemini 2.5, Ollama Qwen3), and multimodal image inputs.

- Agent trace grouping via the `x-torrix-trace` header, rendering multi-step agent runs as a collapsible parent-child tree.

- Budget controls: soft alert webhooks and hard caps that block proxy requests once a daily limit is reached.

- PII detection and masking for emails, phone numbers, credit cards, and IP addresses before storage.

- 300+ model cost comparison showing what the same prompt would cost across alternative models, live-priced.

- Regression testing and evals: mark golden runs, replay against any model, batch-test datasets with LLM judge auto-scoring, and track pass rates.

- Grafana/Prometheus export via a `/metrics` scrape endpoint.

- SQL query interface for direct SELECT queries against the Torrix database.
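The projected month-end spend and the budget controls listed above both come down to simple arithmetic. The sketch below is an illustration under stated assumptions, not Torrix's actual logic: it takes "daily velocity" to mean average spend per elapsed day of the month, and the function names and return values are hypothetical.

```python
import calendar
from datetime import date


def projected_month_end_spend(spend_to_date: float, today: date) -> float:
    """Extrapolate month-to-date spend at the current average daily rate."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    daily_velocity = spend_to_date / today.day  # assumed definition of velocity
    return daily_velocity * days_in_month


def check_budget(spent_today: float, soft_limit: float, hard_cap: float) -> str:
    """Return 'block' at or above the hard cap, 'alert' at or above the soft
    limit, and 'allow' otherwise."""
    if spent_today >= hard_cap:
        return "block"
    if spent_today >= soft_limit:
        return "alert"
    return "allow"
```

For example, $45 spent by 15 May gives a velocity of $3 per day and a projection of $93 for the 31-day month.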

Deployment Model and Privacy

Torrix is self-hosted by default. The homepage states that prompts and responses never touch a third-party cloud and describes the capture overhead as under 1 millisecond thanks to async logging. Docker is the primary deployment path. The Community edition is free with no credit card required and keeps the 10,000 most recent runs with 7-day data retention. The Pro edition adds unlimited runs, 30-day retention, team management with per-project roles, model routing rules, an audit log, online evals, and scheduled cost reports.
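How the two Community limits interact is not spelled out. One plausible reading is that a run is kept only if it is both within the retention window and among the most recent runs. The sketch below implements that reading. The `created_at` field and the function name are hypothetical, and the real pruning rules may differ.

```python
from datetime import datetime, timedelta, timezone


def apply_retention(runs, now, max_runs=10_000, max_age=timedelta(days=7)):
    """Keep runs newer than the cutoff, newest first, capped at max_runs."""
    cutoff = now - max_age
    fresh = [r for r in runs if r["created_at"] >= cutoff]
    fresh.sort(key=lambda r: r["created_at"], reverse=True)
    return fresh[:max_runs]


# Usage: three runs, one of them older than the 7-day window.
now = datetime(2026, 5, 20, tzinfo=timezone.utc)
runs = [
    {"id": "a", "created_at": now - timedelta(days=1)},
    {"id": "b", "created_at": now - timedelta(days=9)},
    {"id": "c", "created_at": now - timedelta(hours=2)},
]
kept = apply_retention(runs, now)
```

Here `kept` holds runs `c` and `a`, in that order, and run `b` is dropped for exceeding the retention window.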

Why It Got Attention

The Torrix homepage explicitly positions the tool as a self-hosted drop-in replacement for Helicone, noting that Helicone raised its entry plan price in 2026 and was acquired by Mintlify. Torrix claims compatibility with the same proxy model and header conventions, and provides a migration guide in its GitHub docs. This positioning targets developers who want cost-controlled, privacy-preserving observability without a managed SaaS dependency.

Pricing

Community

Self-hosted, free forever. Everything you need to observe your LLMs with your data on your server.

- 1 user

- 7-day data retention

- 10,000 most recent runs

- Budget controls (soft alert + hard cap)

- Evals & regression testing

Pro (Founding Member)

Unlimited runs, 30-day retention, and full team management. Founding member price locks in forever.

- Everything in Community

- 30-day data retention

- Unlimited runs

- Unlimited golden runs for evals

- Up to 10 users

- Scheduled cost reports (weekly digest)

- Model routing rules

- Audit log

- Online evals (auto-score every production run)

- Priority email support

- GitHub Actions eval CLI (coming soon)

Enterprise

For regulated industries that need compliance, SSO, and dedicated support.

- Unlimited users

- 90-day retention

- SSO (SAML / Okta)

- Helm chart (Kubernetes)

- Dedicated support

Capabilities

Key Features

- Real-time cost tracking per API call

- Full prompt and response trace logging

- Token usage and latency analytics (p50/p95/p99)

- 300+ model cost comparison

- Budget controls with soft alerts and hard caps

- PII detection and masking

- Agent trace grouping and tree view

- Regression testing with golden run replay

- Batch eval datasets with LLM judge auto-scoring

- Grafana/Prometheus metrics export

- OpenTelemetry OTLP/HTTP receiver

- MCP server built-in

- Browser extension for ChatGPT, Claude, Gemini, and more

- Streaming response instrumentation

- Thinking and reasoning capture (o1, DeepSeek R1, Claude extended thinking)

- Multi-project namespaces

- Per-user cost attribution

- Model routing rules

- Audit log

- SQL query interface

- CSV and JSON export

- Prompt management and versioning

- Outbound webhooks for Slack and PagerDuty

- Cost forecasting

- Repeated prompt detection

- Multimodal trace support

- Shareable run links

- Custom run tags

- Run scoring and LLM judge

- Weekly cost digest

- API key management with scoped permissions