Transformer Lab

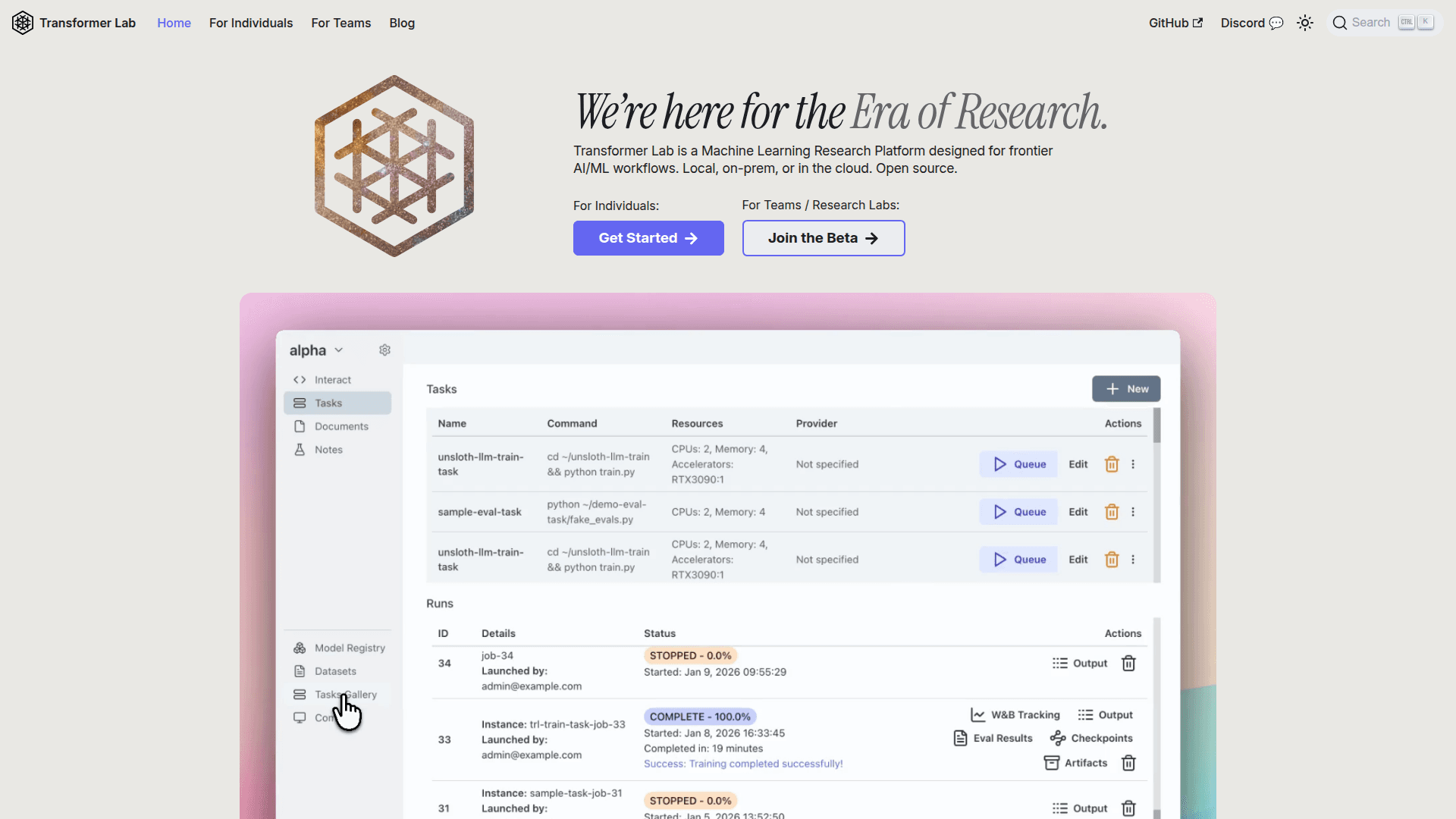

An open-source machine learning research platform for training, fine-tuning, and evaluating LLMs and multimodal models locally, on-prem, or in the cloud.

About Transformer Lab

Transformer Lab is an open-source machine learning research platform designed for frontier AI/ML workflows. It unifies environment management, compute coordination, and experiment tracking into a single workspace, replacing brittle bash scripts and scattered tooling. The platform supports local, on-premises, and cloud deployments, making it suitable for individual researchers and large ML teams alike.

- Distributed Training Orchestration: Run jobs locally, on-prem, or in the cloud without rewriting scripts or managing Slurm templates; scheduling and telemetry keep GPU usage visible.

- Advanced Training & Multimodal Workflows: Supports pre-training, fine-tuning, and evaluation of LLMs, diffusion models, and audio models, with production-ready implementations of DPO, ORPO, SimPO, and GRPO.

- Experiment Tracking: Automatically records hyperparameters, code versions, metrics, and logs for every run, enabling easy comparison and resumption of experiments.

- Artifact, Dataset & Checkpoint Management: Systematically tracks which code, dataset version, and config produced each checkpoint; synchronizes data across nodes even with ephemeral compute.

- RLHF & Preference Optimization: A complete RLHF pipeline, including reward modeling, with orchestration handled automatically from data processing to final model outputs.

- Comprehensive Evaluations: Run EleutherAI LM Evaluation Harness benchmarks, LLM-as-a-Judge comparisons, and objective metrics; red-team models and visualize results over time with exportable dashboards.

- Broad Integrations: Works with Weights & Biases, GitHub, SkyPilot, Slurm, Kubernetes, Ray, Hugging Face TRL, Unsloth, PyTorch, and MLX on NVIDIA and AMD GPUs, TPUs, or Apple Silicon.

- Open Source: Freely available on GitHub; get started by visiting the documentation and installing the app on your preferred compute environment.
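To make the experiment-tracking idea above concrete, here is a minimal sketch of what a per-run record might hold and how two runs could be compared. It is not Transformer Lab's API: `RunRecord`, `log_metric`, `config_fingerprint`, and `diff_hyperparameters` are hypothetical names chosen for illustration, and only the Python standard library is used.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class RunRecord:
    """Hypothetical record of one training run (illustration only)."""
    run_id: str
    hyperparameters: dict
    code_version: str  # e.g. a git commit hash
    metrics: list = field(default_factory=list)

    def log_metric(self, step, name, value):
        # Append one metric observation for a given training step.
        self.metrics.append({"step": step, "name": name, "value": value})

    def config_fingerprint(self):
        # Hash the hyperparameters with sorted keys so that key order
        # does not change the fingerprint.
        payload = json.dumps(self.hyperparameters, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


def diff_hyperparameters(a, b):
    """Return {name: (value_in_a, value_in_b)} for settings that differ."""
    keys = set(a.hyperparameters) | set(b.hyperparameters)
    return {
        k: (a.hyperparameters.get(k), b.hyperparameters.get(k))
        for k in sorted(keys)
        if a.hyperparameters.get(k) != b.hyperparameters.get(k)
    }


baseline = RunRecord("run-1", {"lr": 1e-4, "batch_size": 8}, "abc123")
variant = RunRecord("run-2", {"batch_size": 8, "lr": 5e-5}, "abc123")
baseline.log_metric(step=100, name="loss", value=1.92)
changed = diff_hyperparameters(baseline, variant)
```

Here `changed` contains only the `lr` entry, since `batch_size` is identical in both runs. A real tracker would also persist logs and artifacts; this sketch covers only the comparison step.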

Pricing

Open Source

Fully open-source platform for individuals and teams. Free to use, self-hosted.

- Distributed training orchestration

- Pre-training and fine-tuning

- Multimodal model support

- Experiment tracking

- Artifact and checkpoint management

Teams / Research Labs (Beta)

Beta plan for teams and research labs with additional collaboration and managed features.

- All open-source features

- Team collaboration

- Managed infrastructure support

Capabilities

Key Features

- Distributed training orchestration

- Pre-training and fine-tuning of LLMs

- Multimodal model support (Diffusion, Audio, TTS)

- DPO, ORPO, SimPO, and GRPO preference optimization

- RLHF pipeline with reward modeling

- Experiment tracking with hyperparameter and metric logging

- Artifact, dataset, and checkpoint management

- EleutherAI LM Evaluation Harness benchmarks

- LLM-as-a-Judge evaluations

- Red-teaming and model evaluation dashboards

- Slurm and Kubernetes integration

- Weights & Biases integration

- Apple Silicon (MLX) support

- Local, on-prem, and cloud deployment
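For readers unfamiliar with the preference-optimization methods listed above, the following is a rough sketch of the standard DPO objective for a single preference pair, written in plain Python. It is not taken from Transformer Lab's code: the function name `dpo_loss`, its parameter names, and the default `beta=0.1` are assumptions made for illustration. Inputs are the summed log-probabilities of the chosen and rejected responses under the policy being trained and under a frozen reference model.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one (chosen, rejected) pair; illustrative sketch only."""
    # How much more (in log-probability) the policy favors each response
    # than the reference model does.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) == log(1 + exp(-x)), computed in a numerically
    # stable form that avoids overflow for large |x|.
    return max(-logits, 0.0) + math.log1p(math.exp(-abs(logits)))


# When the policy matches the reference, the loss is log(2), about 0.6931.
neutral = dpo_loss(-1.0, -1.0, -1.0, -1.0)
# Raising the chosen response's likelihood relative to the rejected one
# lowers the loss.
improved = dpo_loss(-0.5, -2.0, -1.0, -1.0)
```

In practice this quantity is averaged over a batch and computed with tensors in a framework such as PyTorch; the scalar version is shown only to make the formula easy to read.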