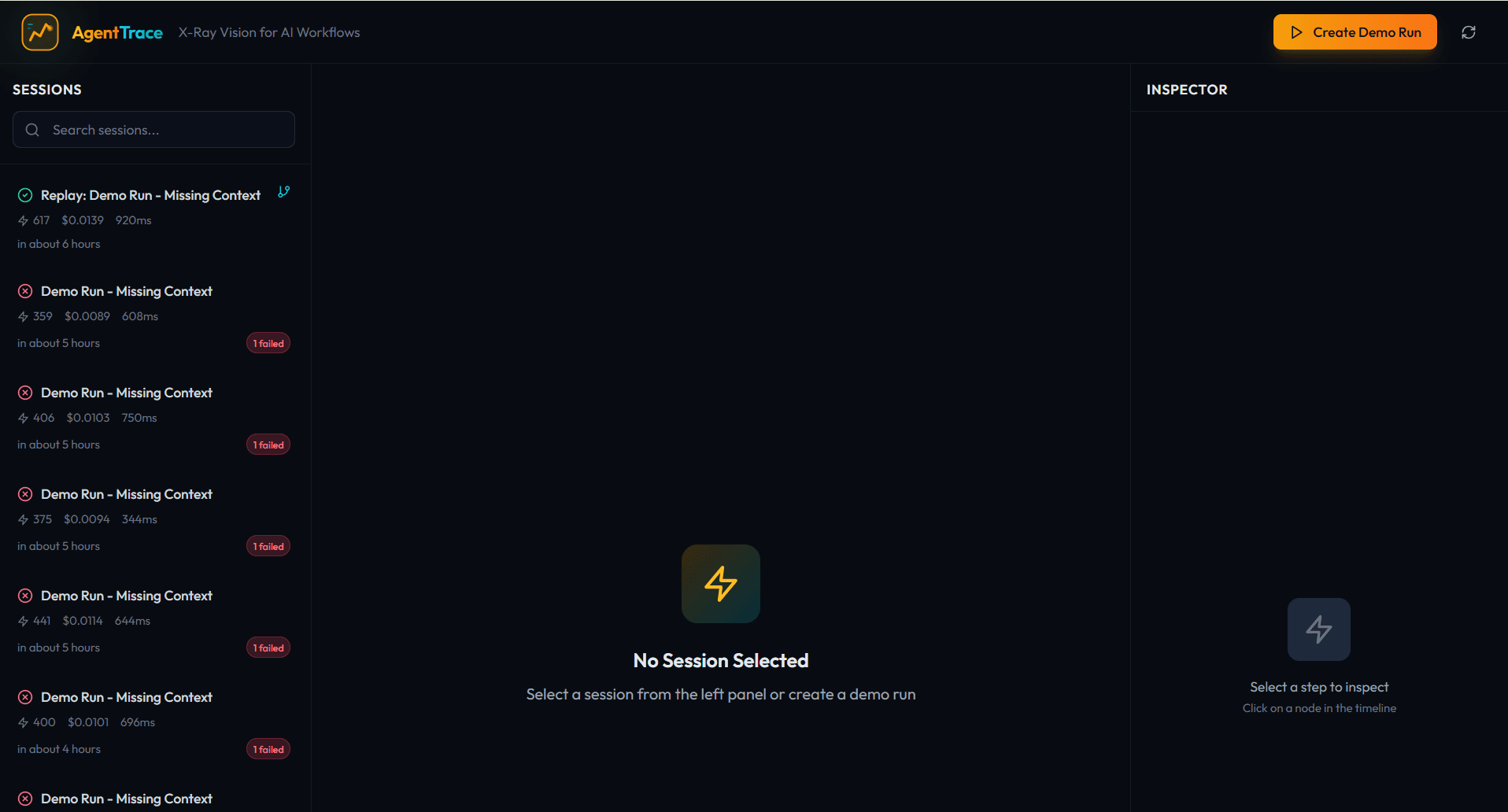

AgentTrace - X-Ray Vision for AI Workflows

Debugging AI agents sucks. Your pipeline fails at step 47, you have no idea why, so you add print statements, re-run everything from scratch, and wait. I built AgentTrace to fix this. AgentTrace gives you X-ray vision into AI workflows. See your agent pipeline as an interactive flowchart. Click any node to inspect inputs, outputs, tool calls, and errors. Every step shows tokens, cost, and latency. The killer feature is the Replay Engine. Failed at the Reviewer step? Don't re-run Planner and Coder. Click the failed node, tweak the context, replay from that exact point. AgentTrace copies prior events and re-executes only what's needed. You can also diff two sessions to see exactly what changed between a working and broken run. Tech: FastAPI with event sourcing, Next.js 14, React Flow, SQLite, Docker. Built in a weekend with Claude. Surprise: Event sourcing isn't just for databases - it's perfect for AI observability since agent workflows are naturally sequential and immutable.

Tools & Technologies Used

Build Details

Build Time

1 week

Difficulty

intermediate

About the Author

Aspiring Software Engineer

Cosmas Mandikonza is a skilled software developer specializing in full-stack web development and cloud solutions. With a passion for creating efficient and scalable applications, he actively contributes to open-source projects on GitHub. Cosmas is dedicated to continuous learning and leveraging technology to solve complex problems. Explore his work at [GitHub](https://github.com/CosmasMandikonza).

Comments (2)

Join the discussion

Sign in to share your thoughts

Great build!

The Replay Engine is genuinely clever since most observability tools like Langfuse, LangSmith, and Phoenix let you see what failed, but you still have to re-run the whole pipeline from scratch. Being able to click a failed node, tweak context, and resume from that exact point is a real time-saver that the big players don’t offer yet. That space is crowded (Langfuse has 19k GitHub stars, LangSmith has LangChain’s backing), but none of them do mid-pipeline replay so if you leaned hard into that differentiator, there’s a genuine gap to fill.

Question: How does the Replay Engine handle non-determinism and side effects? When you replay from step 47, the LLM will likely give a different response than the original run and for tool calls that hit external APIs or write to databases, is there a dry-run mode to prevent duplicate side effects?