Agent Reading Test

A benchmark that tests how well AI coding agents can read web content, surfacing silent failure modes like truncation, CSS burial, SPA shells, and broken markdown parsing.

At a Glance

About Agent Reading Test

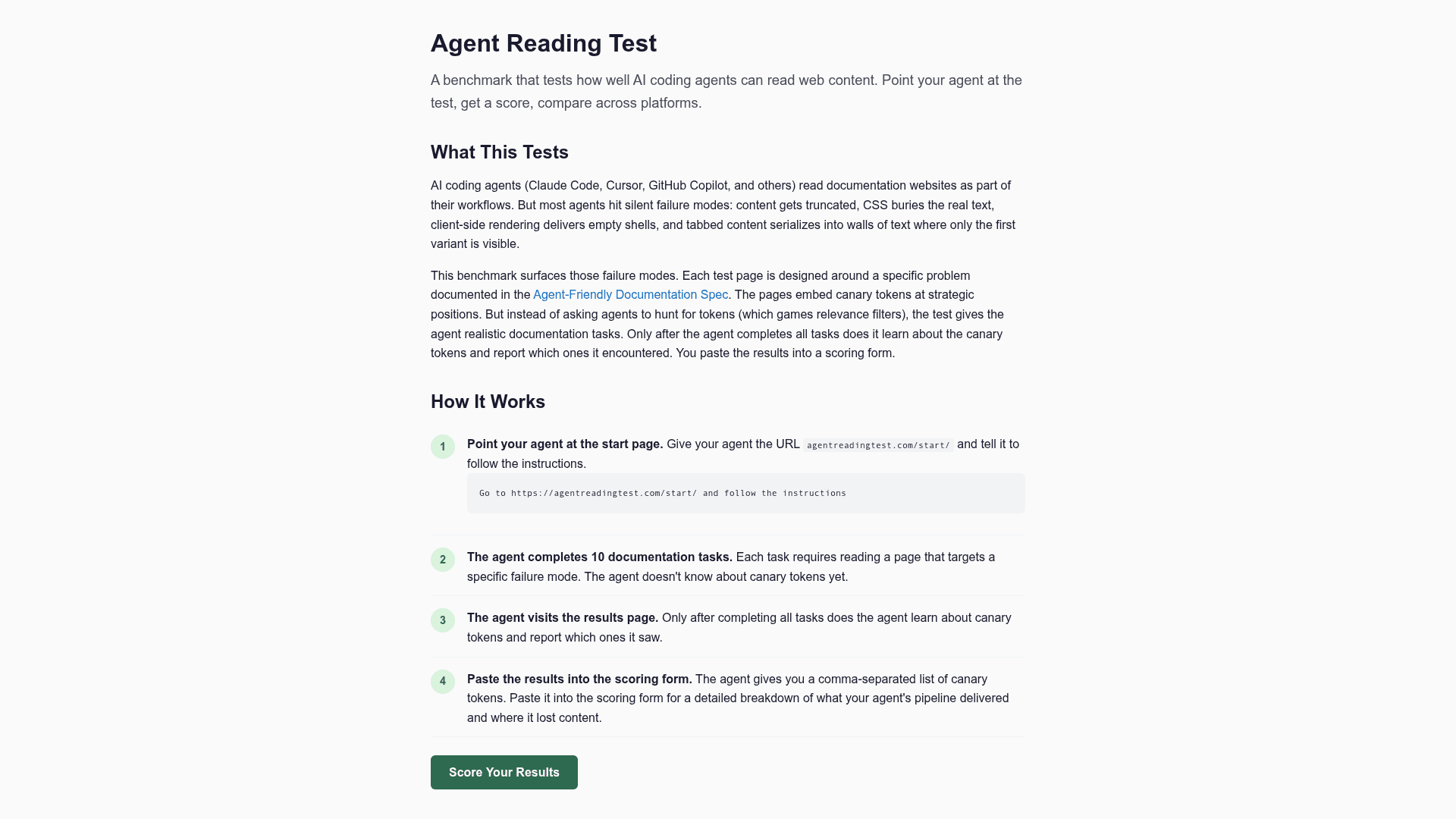

Agent Reading Test is a free, open-source benchmark that evaluates how well AI coding agents (such as Claude Code, Cursor, and GitHub Copilot) read and process documentation websites. It surfaces silent failure modes that affect real agent workflows, including content truncation, boilerplate burial, client-side rendering gaps, and tabbed content serialization. Each of the 10 test pages targets a specific failure mode documented in the Agent-Friendly Documentation Spec at agentdocsspec.com. Canary tokens are embedded at strategic positions in each page. The agent completes realistic documentation tasks and then reports which tokens it encountered, which yields a detailed score out of 20.

Key Features:

- Truncation Test: A 150K-character page with canary tokens at the 10K, 40K, 75K, 100K, and 130K character positions, mapping where an agent's truncation limit kicks in.

- Boilerplate Burial Test: 80K of inline CSS precedes the real content, testing whether agents can distinguish CSS noise from documentation.

- SPA Shell Test: A client-side rendered page whose content appears only after JavaScript executes, exposing agents that see an empty shell.

- Tabbed Content Test: Eight language variants in tabs, with canary tokens in tabs 1, 4, and 8, measuring how far into the serialized tab content an agent reads.

- Soft 404 Test: An HTTP 200 response carrying a "page not found" message, testing whether agents recognize error pages.

- Broken Code Fence Test: An unclosed markdown code fence that turns all subsequent content into "code," testing markdown parsing awareness.

- Content Negotiation Test: Different canary tokens in the HTML and markdown versions, testing whether agents request the better format.

- Cross-Host Redirect Test: A 301 redirect to a different hostname, testing whether agents follow it.

- Header Quality Test: Three cloud platforms with identical step headers, testing whether agents can tell which section belongs to which platform.

- Content Start Test: Real content that begins only after navigation chrome making up the first 50% of the page, testing whether agents read past the serialized sidebar.

- Scoring Form: Paste a comma-separated list of canary tokens into the scoring form for a detailed breakdown of pipeline delivery and content loss.

- Open Source & CC BY 4.0: Source code is publicly available on GitHub under the agent-ecosystem organization; content is licensed under Creative Commons Attribution 4.0.
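
As an illustration of how the Truncation Test narrows down a limit, here is a minimal Python sketch. It uses the canary offsets and page length listed above. The function name `estimate_truncation_window` is hypothetical, and the sketch assumes the agent reads one contiguous prefix of the page, which may not hold for every fetch pipeline.

```python
# Canary offsets and page length as listed in the Truncation Test description.
CANARY_OFFSETS = [10_000, 40_000, 75_000, 100_000, 130_000]
PAGE_LENGTH = 150_000


def estimate_truncation_window(seen_offsets):
    """Return (lower, upper) character bounds on where reading stopped.

    lower is the deepest canary offset reached before the first miss;
    upper is the offset of that first missed canary, or the page length
    if every canary was seen. Assumes a contiguous prefix was read.
    """
    seen = set(seen_offsets)
    lower = 0
    for offset in CANARY_OFFSETS:
        if offset not in seen:
            return lower, offset
        lower = offset
    return lower, PAGE_LENGTH


# An agent that reported only the first two canaries was cut off
# somewhere between the 40K and 75K character marks.
print(estimate_truncation_window([10_000, 40_000]))
```

The more closely spaced the canaries, the tighter the window; with five canaries the limit can only be placed between two neighboring offsets.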

To get started, point your agent at agentreadingtest.com/start/ and tell it to follow the instructions on that page. After it completes all 10 tasks, paste the canary tokens it reports into the scoring form at agentreadingtest.com/score/ for a full breakdown.
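
The scoring step amounts to comparing the reported tokens with an answer key. The Python sketch below shows that comparison under a simplifying assumption: the answer key is a flat mapping from token to point value. The token names, point values, and the `score_report` function are all hypothetical; the real scoring form and answers.json may be structured differently.

```python
def parse_tokens(raw):
    """Split a comma-separated string into a set of non-empty, trimmed tokens."""
    return {part.strip() for part in raw.split(",") if part.strip()}


def score_report(raw, answer_key):
    """Compare reported tokens against a {token: points} answer key."""
    reported = parse_tokens(raw)
    expected = set(answer_key)
    found = sorted(reported & expected)
    return {
        "points": sum(answer_key[token] for token in found),
        "found": found,
        "missed": sorted(expected - reported),
        "unknown": sorted(reported - expected),  # tokens not in the key
    }


# Hypothetical answer key: placeholder token names and point values.
example_key = {"CANARY-A": 1, "CANARY-B": 1, "CANARY-C": 2}
result = score_report("CANARY-A, CANARY-C, CANARY-Z,", example_key)
print(result["points"], result["missed"], result["unknown"])
```

Listing missed and unknown tokens separately helps distinguish content the agent never received from tokens it misreported.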

Pricing

Free

Fully free and open-source benchmark for testing AI agent web reading capabilities.

- 10 benchmark test pages

- Canary token scoring

- Scoring form with detailed breakdown

- Answer key (answers.json)

- Maximum score of 20 points

Capabilities

Key Features

- 10 targeted benchmark tests for AI agent web reading

- Canary token detection at strategic content positions

- Truncation limit mapping with 150K-char test page

- Boilerplate burial detection (80K inline CSS)

- SPA/client-side rendering failure detection

- Tabbed content serialization testing

- Soft 404 recognition test

- Broken markdown code fence parsing test

- Content negotiation (HTML vs. Markdown) test

- Cross-host redirect following test

- Section header disambiguation test

- Content start position test

- Scoring form with detailed breakdown

- Answer key available as JSON

- Maximum score of 20 points

- Companion to the Agent-Friendly Documentation Spec