agent-skills-eval

A test runner for Agent Skills that evaluates whether your SKILL.md actually improves model performance by running evals with and without the skill loaded.

At a Glance

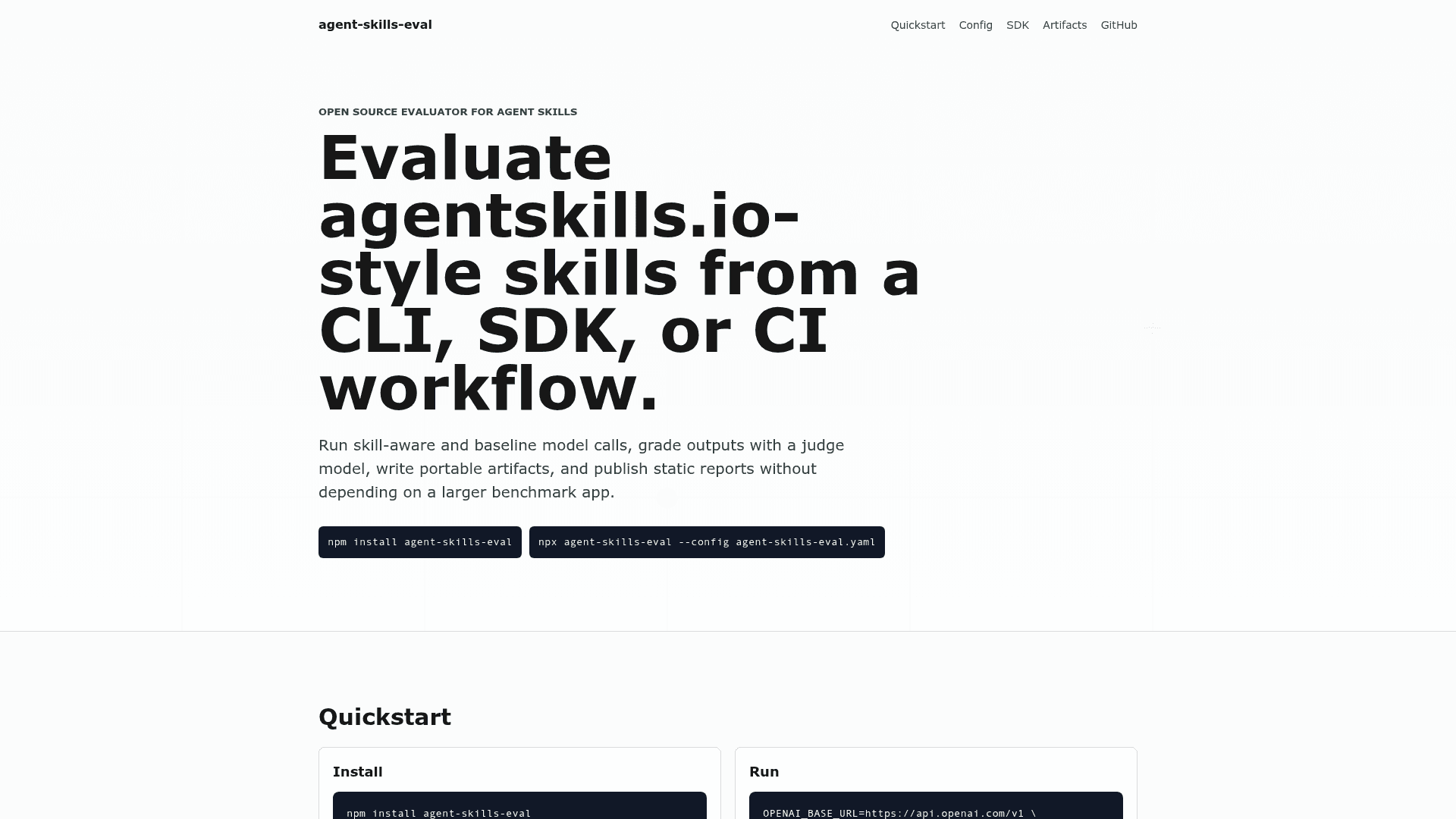

Fully free and open-source under the MIT license. Install via npm or run with npx.

Listed May 2026

About agent-skills-eval

agent-skills-eval is an open-source TypeScript CLI and SDK that brings empirical testing to the Agent Skills ecosystem. It runs every eval twice — once with the skill loaded into context and once without — then uses a judge model to grade both outputs side by side, giving developers concrete evidence of whether a skill improves model behavior. The project is MIT-licensed and published on npm under the agent-skills-eval package name.

What It Is

agent-skills-eval is a test framework purpose-built for the agentskills.io specification, which defines a standard for giving AI agents domain knowledge via SKILL.md files. The tool fills the gap between writing a skill and knowing whether it works: it automates the with_skill vs without_skill comparison, judge-grades the outputs against declared assertions, and produces portable JSON artifacts plus a static HTML report. It is framework-agnostic and works with any OpenAI-compatible API endpoint.

How the Evaluation Loop Works

The core mental model is a controlled A/B test per eval:

- The same prompt is sent to the target model twice: once with the SKILL.md injected into context, once without (baseline)
- A configurable judge model scores both outputs against the eval's expected_output and assertions
- Results are written to a structured iteration-N/ workspace with benchmark.json, per-eval grading.json, and timing data
- A static HTML report is generated showing pass rates, assertion-level judge reasoning, full outputs side by side, and token/latency metrics

The --baseline flag enables the comparison run; omitting it produces only the with_skill result.
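
The loop above can be sketched in a few lines of TypeScript. This is a minimal illustration, not the library's implementation: the type names and the functions modesFor, buildMessages, passRate, and skillDelta are hypothetical, and placing SKILL.md in a system message is an assumption about how the skill is injected.

```typescript
// Hypothetical types and helpers for illustration; not the library's API.
type Mode = "with_skill" | "without_skill";

interface ChatMessage {
  role: "system" | "user";
  content: string;
}

interface AssertionResult {
  assertion: string;
  passed: boolean; // verdict assigned by the judge model
}

// With the baseline enabled both modes run; otherwise only with_skill.
function modesFor(baseline: boolean): Mode[] {
  return baseline ? ["with_skill", "without_skill"] : ["with_skill"];
}

// The only difference between the two runs is whether the SKILL.md text
// is present in context (assumed here to be a system message).
function buildMessages(prompt: string, skillMd: string, mode: Mode): ChatMessage[] {
  const messages: ChatMessage[] = [];
  if (mode === "with_skill") {
    messages.push({ role: "system", content: skillMd });
  }
  messages.push({ role: "user", content: prompt });
  return messages;
}

// Fraction of assertions marked as passed (0 when there are none).
function passRate(results: AssertionResult[]): number {
  if (results.length === 0) return 0;
  return results.filter((r) => r.passed).length / results.length;
}

// A positive delta suggests the skill helped on this eval.
function skillDelta(withSkill: AssertionResult[], withoutSkill: AssertionResult[]): number {
  return passRate(withSkill) - passRate(withoutSkill);
}
```

Comparing pass rates per eval, rather than looking only at the with_skill score, is what separates a skill that helps from a model that would have passed anyway.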

CLI and SDK Surface

The tool ships both a one-liner CLI and a full TypeScript SDK for programmatic use:

- CLI: npx agent-skills-eval ./skills --target gpt-4o-mini --judge gpt-4o-mini --baseline --strict
- YAML config: supports root, workspace, concurrency, include/exclude globs, logging format (pretty, jsonl, silent), and report output path
- TypeScript SDK: evaluateSkills() accepts typed config, streams events via onEvent, and supports consoleReporter() and jsonlReporter() out of the box
- Custom providers: implement a five-field Provider interface to connect local model servers (Ollama, vLLM, llama.cpp), proprietary APIs, or mock providers for unit tests
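
To illustrate why a mock provider is useful in unit tests, here is a rough sketch. The SketchProvider shape and createMockProvider helper are hypothetical and deliberately simplified; the library's actual five-field Provider interface is not reproduced here and may look quite different.

```typescript
// Hypothetical provider shape for illustration only; this is NOT the
// library's real Provider interface.
interface SketchProvider {
  name: string;
  complete(messages: { role: string; content: string }[]): Promise<string>;
}

// A mock provider that returns canned replies keyed by the last message,
// so tests can run without network access or API keys.
function createMockProvider(
  replies: Record<string, string>,
  fallback = ""
): SketchProvider {
  return {
    name: "mock",
    async complete(messages) {
      const last = messages[messages.length - 1];
      const key = last ? last.content : "";
      return replies[key] ?? fallback;
    },
  };
}
```

Because the replies are deterministic, a test can assert on exact outputs, which is not possible against a live model.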

agentskills.io Spec Compliance

The library implements the full agentskills.io specification end to end, including strict SKILL.md YAML frontmatter validation (required name and description, lowercase-hyphenated format, parent-directory name match), the evals/evals.json schema, and the official iteration-N/<eval>/<mode>/ artifact layout. Beyond the spec, the SDK adds per-eval defaults, model params, tool definitions, deterministic tool_assertions, and a workspaceLayout: "flat" option for multi-skill dashboards.
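
The frontmatter rules listed above might be checked along these lines. This is a sketch under stated assumptions rather than the library's validator: validateFrontmatter and NAME_PATTERN are hypothetical names, the input is assumed to be already-parsed YAML, and the exact regular expression for "lowercase-hyphenated" is a guess at the intended format.

```typescript
// Hypothetical validator for illustration; not the library's code.
interface Frontmatter {
  name?: unknown;
  description?: unknown;
}

// Assumed format: lowercase letters or digits separated by single hyphens,
// e.g. "pdf-forms". The spec's exact rule may differ.
const NAME_PATTERN = /^[a-z0-9]+(-[a-z0-9]+)*$/;

// Returns a list of human-readable errors; an empty list means valid.
function validateFrontmatter(fm: Frontmatter, parentDir: string): string[] {
  const errors: string[] = [];
  if (typeof fm.name !== "string" || fm.name.length === 0) {
    errors.push("name is required");
  } else {
    if (!NAME_PATTERN.test(fm.name)) {
      errors.push("name must be lowercase-hyphenated");
    }
    if (fm.name !== parentDir) {
      errors.push("name must match the parent directory");
    }
  }
  if (typeof fm.description !== "string" || fm.description.trim().length === 0) {
    errors.push("description is required");
  }
  return errors;
}
```

Collecting all errors instead of failing on the first one lets a skill author fix every frontmatter problem in a single pass.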

Platform and Compatibility

agent-skills-eval is OpenAI-compatible by default: it works with any endpoint that speaks the OpenAI chat API, including OpenAI, Together, Groq, Anthropic (through an OpenAI-compatibility layer), and local Llama servers. It requires Node.js (the supported version is specified in package.json) and is distributed via npm. Artifacts are plain JSON and JSONL, making them portable and easy to diff across runs or plug into custom dashboards.

Current Status

The repository was created in May 2026 and last updated on May 11, 2026. The project had accumulated 406 stars and 16 forks shortly after launch, with CI passing on the main branch. It is actively maintained under the MIT license with full documentation hosted on GitHub Pages at darkrishabh.github.io/agent-skills-eval.


Pricing

Open Source

- Full CLI and TypeScript SDK

- with_skill vs without_skill baseline comparison

- Judge-graded outputs

- Static HTML reports

- Portable JSON/JSONL artifacts

Capabilities

Key Features

- with_skill vs without_skill baseline comparison

- Judge-graded outputs with cited assertions

- TypeScript SDK and CLI

- OpenAI-compatible provider support

- Tool-call assertions for agent evals

- Portable JSON and JSONL artifacts

- Static HTML reports

- YAML configuration file support

- Custom provider interface

- Concurrency control

- agentskills.io spec compliance

- SKILL.md frontmatter validation

- Iteration-N artifact layout

- JSONL event streaming

- Per-eval grading.json and benchmark.json output