BitNet

Microsoft's official inference framework for BitNet, enabling efficient inference with 1-bit (ternary, 1.58-bit) large language models on CPUs, with no GPU required.

At a Glance

Fully free and open-source under MIT license. Clone and use without cost.

Listed Mar 2026

About BitNet

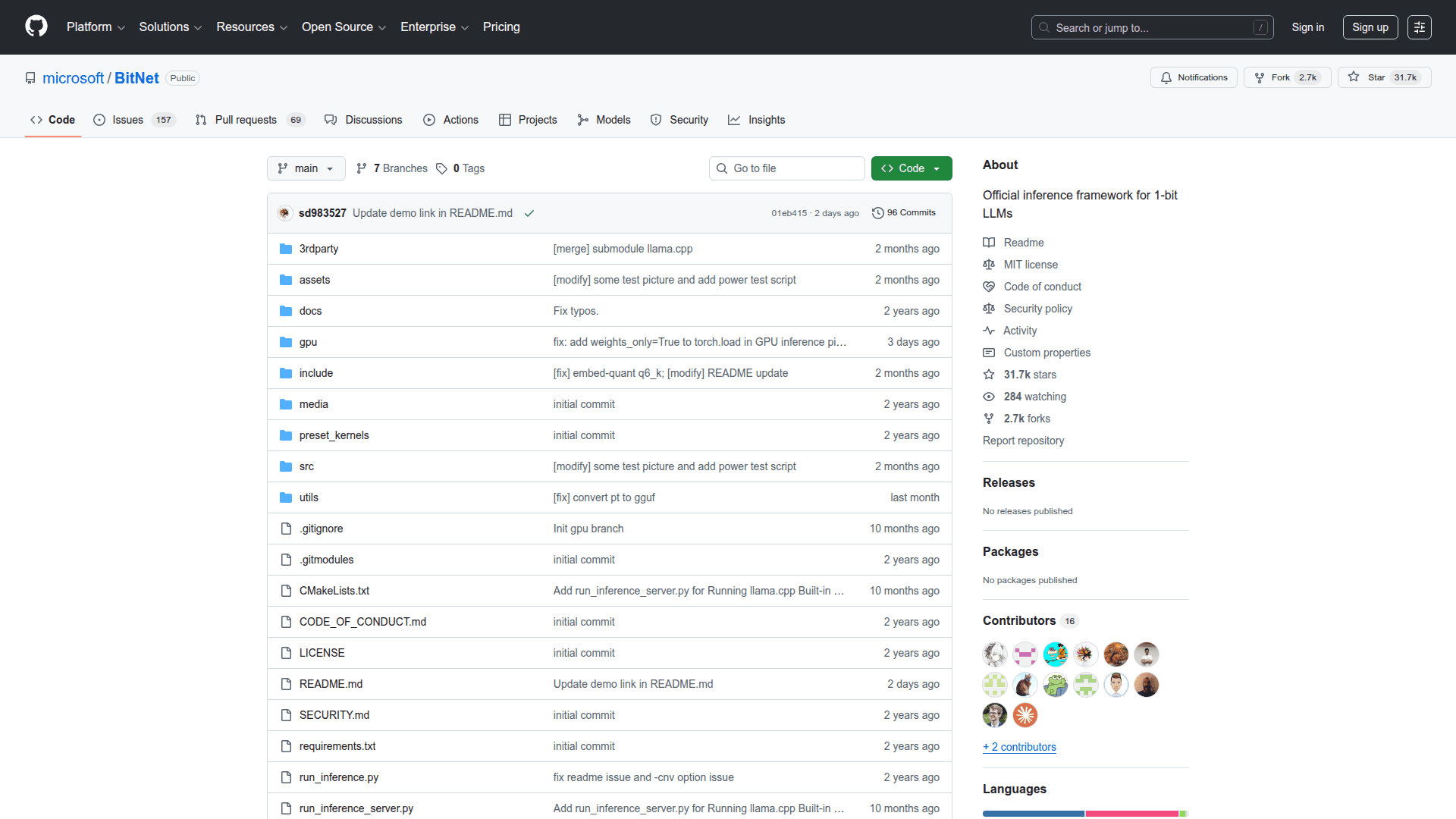

BitNet is Microsoft's open-source framework for running 1-bit large language models (LLMs) efficiently on standard CPUs. It targets models built on the BitNet b1.58 architecture, in which each weight takes one of three values (-1, 0, or +1) and therefore carries about 1.58 bits of information (log2 of 3), compared with 16 bits for a typical half-precision weight. Smaller weights cut memory use and let most multiplications be replaced by additions, which makes it possible to run capable LLMs on consumer hardware without expensive GPUs. The project is hosted on GitHub and is maintained by Microsoft Research.
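To make the 1.58-bit figure concrete, the sketch below computes the information content of a three-valued weight and estimates weight storage for a model. The 2-billion parameter count and the 2-bits-per-weight packing are illustrative assumptions, not figures taken from this listing, and the estimate ignores activations, embeddings kept at higher precision, and runtime overhead.

```python
import math


def bits_per_ternary_weight() -> float:
    """Information content of a weight that can be -1, 0, or +1."""
    return math.log2(3)


def weight_memory_gib(num_params: int, bits_per_weight: float) -> float:
    """Approximate storage for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 2**30


# Hypothetical model size, chosen only for illustration.
params = 2_000_000_000

fp16_gib = weight_memory_gib(params, 16)
# Theoretical lower bound for ternary weights (about 1.58 bits each).
ternary_ideal_gib = weight_memory_gib(params, bits_per_ternary_weight())
# A simple packing that spends 2 bits per weight (assumed, easy to decode).
ternary_packed_gib = weight_memory_gib(params, 2)

print(f"bits per ternary weight: {bits_per_ternary_weight():.3f}")
print(f"fp16 weights:            {fp16_gib:.2f} GiB")
print(f"ternary, ideal:          {ternary_ideal_gib:.2f} GiB")
print(f"ternary, 2-bit packed:   {ternary_packed_gib:.2f} GiB")
```

Under these assumptions, even a simple 2-bit packing stores the weights in one eighth of the space that half precision needs.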

- 1-bit LLM inference — Run models whose weights are restricted to -1, 0, or +1 (about 1.58 bits each), which compresses them heavily; the BitNet b1.58 paper reports quality comparable to full-precision models of similar size.

- CPU-optimized kernels — Leverages hand-tuned kernels for x86 and ARM architectures to maximize throughput on commodity hardware.

- Local inference — Run models entirely on-device with no cloud dependency, preserving privacy and reducing latency.

- Open-source codebase — Clone the repository from GitHub and follow the README to build and run inference locally.

- Multiple model support — Compatible with BitNet-native pretrained models released by Microsoft Research.

- Cross-platform — Supports Windows, macOS, and Linux environments with standard build toolchains.

- Minimal dependencies — Designed to run with minimal external libraries, making setup straightforward for developers.

- Research-grade implementation — Follows the BitNet b1.58 research paper, making it suitable for both academic study and production-oriented experimentation.
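The ternary weights mentioned in the list above can be illustrated with a short sketch. It follows the absmean scheme described in the BitNet b1.58 paper (scale by the mean absolute weight, round, clip to [-1, 1]) and then computes a matrix-vector product using only additions and subtractions. This is a pure-Python illustration with hypothetical function names and does not reproduce the repository's optimized kernels.

```python
def absmean_quantize(weights, eps=1e-8):
    """Quantize a 2-D list of floats to {-1, 0, +1} with one shared scale.

    Each weight is divided by the mean absolute value of the matrix,
    rounded to the nearest integer, and clipped to the range [-1, 1].
    """
    magnitudes = [abs(w) for row in weights for w in row]
    scale = sum(magnitudes) / len(magnitudes) + eps
    quantized = [
        [max(-1, min(1, round(w / scale))) for w in row]
        for row in weights
    ]
    return quantized, scale


def ternary_matvec(quantized, scale, x):
    """Multiply a ternary matrix by a vector without weight multiplications.

    +1 adds the input, -1 subtracts it, 0 skips it; the shared scale is
    applied once per output element.
    """
    out = []
    for row in quantized:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi
            elif w == -1:
                acc -= xi
        out.append(acc * scale)
    return out


# Small illustrative weight matrix and input vector.
weights = [[0.9, -0.05, -1.2],
           [0.02, 0.4, -0.6]]
x = [1.0, 2.0, 3.0]

q, scale = absmean_quantize(weights)
y = ternary_matvec(q, scale, x)
print(q)
print(y)
```

Because every weight is -1, 0, or +1, the inner loop needs no per-weight multiplication, which is the property that CPU kernels for ternary models are designed to exploit.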

Pricing

Open Source

- 1-bit LLM inference on CPU

- x86 and ARM kernel support

- Cross-platform (Windows, macOS, Linux)

- Full source code access

- Community support via GitHub Issues

Capabilities

Key Features

- 1-bit LLM inference

- CPU-optimized inference kernels

- x86 and ARM architecture support

- Local on-device inference

- BitNet b1.58 model support

- Cross-platform support (Windows, macOS, Linux)

- Minimal external dependencies

- Open-source implementation