Learning Opportunities

A Claude Code and Codex plugin skill that offers evidence-based learning exercises during AI-assisted coding to help developers build genuine expertise, not just ship faster.

About Learning Opportunities

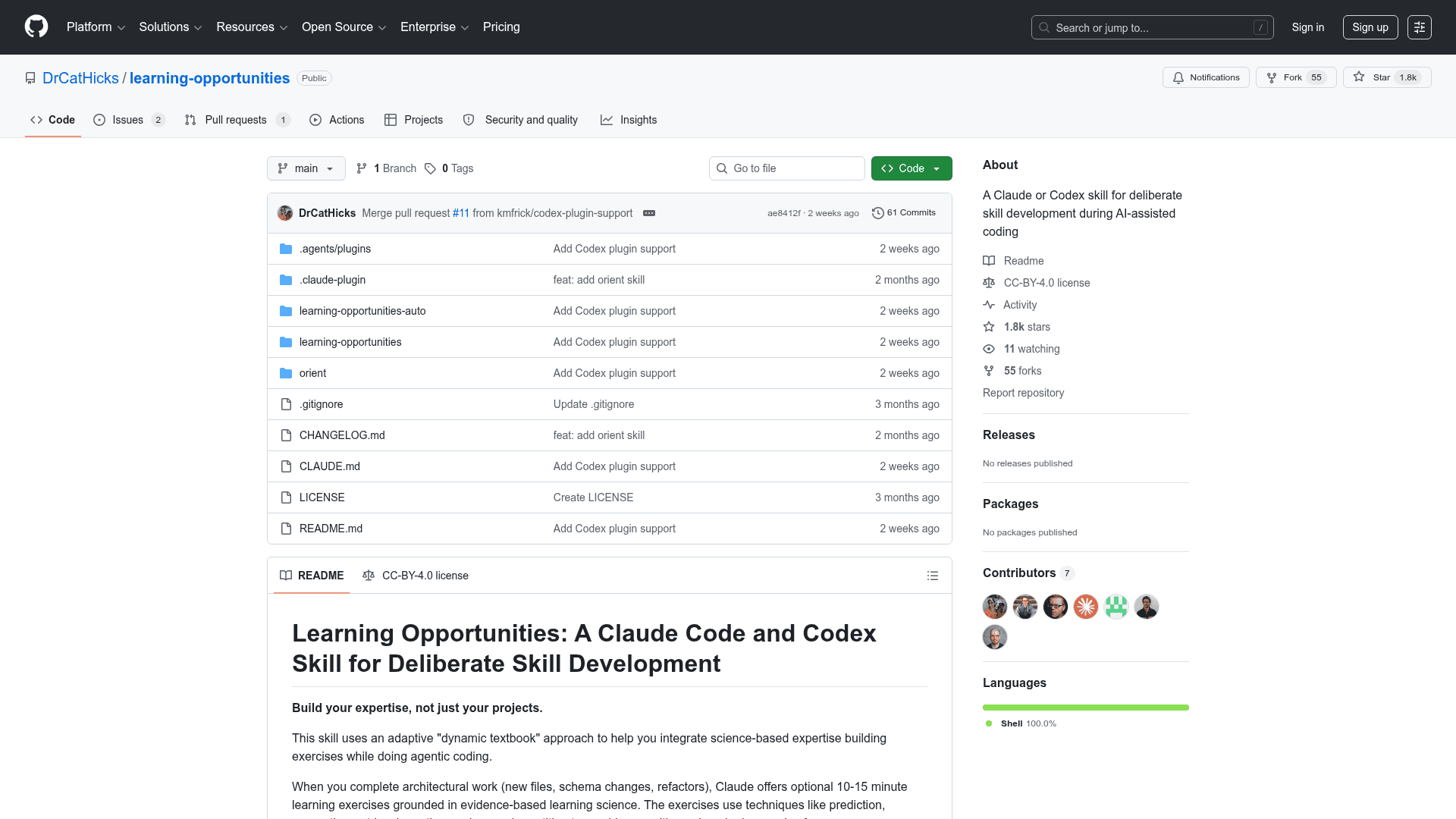

Learning Opportunities is an open-source plugin skill for Claude Code and OpenAI Codex, created by Dr. Cat Hicks, a psychological scientist who studies software teams and technology work. It uses an adaptive "dynamic textbook" approach to embed science-backed learning exercises into agentic coding workflows, targeting the specific cognitive risks that AI-assisted development can introduce. The project is licensed under Creative Commons Attribution 4.0 International and is freely available on GitHub.

What It Is

Learning Opportunities is a plugin-style skill that hooks into Claude Code or Codex sessions and, after significant architectural work (new files, schema changes, refactors, unfamiliar patterns), offers the developer an optional 10–15 minute interactive learning exercise. Rather than replacing the coding workflow, it introduces a deliberate "learning mode" grounded in cognitive and educational psychology research. The skill is designed to counteract well-documented learning risks that emerge when developers rely heavily on AI-generated code without active engagement.

The Learning Science Behind It

The skill draws on a substantial bibliography of peer-reviewed research, including work on the generation effect, fluency illusion, spacing effect, metacognition, and retrieval practice. The README identifies five specific risks that AI coding tools can introduce:

- Generation effect: Accepting AI-generated code skips the active processing that builds understanding.

- Fluency illusion: Clean generated code can feel more understood than it actually is.

- Spacing effect: Machine-velocity workflows push toward cramming without the reflection cadence that aids retention.

- Metacognition: Fast workflows leave little room to monitor one's own learning or build accurate self-models of expertise.

- Retrieval: Agentic models tend to give complete answers, reducing opportunities for self-testing that strengthens retention.

Exercise Types and Workflow

When triggered after significant work, Claude asks: "Would you like to do a quick learning exercise on [topic]? About 10–15 minutes." A key design principle is that Claude pauses and waits for the developer's input rather than answering its own questions, which deliberately pushes against the model's default of providing complete answers. Exercise types include:

- Prediction → Observation → Reflection: What do you expect to happen? Now let's see. What surprised you?

- Generation → Comparison: Sketch your approach before seeing the implementation.

- Trace the path: Walk through execution step by step, predicting each transition.

- Debug this: What would go wrong here, and why?

- Teach it back: Explain a component as if onboarding a new developer.

- Retrieval check-in: At session start, what do you remember from last time?

Two suppression conditions prevent over-prompting: Claude will not offer exercises if the developer has already declined once in the session, or if two exercises have already been completed.
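
The two suppression conditions amount to a small decision rule, sketched below in Python for illustration only. The names `SessionState`, `should_offer_exercise`, and `MAX_EXERCISES_PER_SESSION` are hypothetical and do not come from the project. However the skill actually encodes these rules, this sketch only mirrors the behavior described above.

```python
from dataclasses import dataclass

# Cap taken from the description above: no offers after two completed exercises.
MAX_EXERCISES_PER_SESSION = 2


@dataclass
class SessionState:
    """Hypothetical per-session bookkeeping (an assumption, not the skill's data model)."""
    declined: bool = False          # developer has said "no" once this session
    completed_exercises: int = 0    # exercises finished this session


def should_offer_exercise(state: SessionState, significant_work: bool) -> bool:
    """Return True only if significant work just happened and neither suppression rule applies."""
    if not significant_work:
        return False
    if state.declined:
        return False
    return state.completed_exercises < MAX_EXERCISES_PER_SESSION


if __name__ == "__main__":
    print(should_offer_exercise(SessionState(), significant_work=True))
    print(should_offer_exercise(SessionState(declined=True), significant_work=True))
    print(should_offer_exercise(SessionState(completed_exercises=2), significant_work=True))
```

Under these assumptions, a fresh session allows an offer, while a single decline or two completed exercises suppresses further offers for the rest of the session.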

Installation and Ecosystem

The skill installs as a plugin marketplace entry for both Claude Code and Codex. For Claude Code, users add the marketplace via /plugin marketplace add and then install the specific plugin. For Codex, a single codex plugin marketplace add command pulls it from GitHub. The repository also ships two optional companion skills. The first, learning-opportunities-auto, hooks into git commits to automatically consider offering an exercise post-commit (available on Linux, macOS, and Windows with setup). The second, orient, generates repo orientation lessons using strategies from empirical research on program comprehension and expert codebase navigation.

Team Measurement Playbook

The project includes MEASURE-THIS.md, a companion playbook for teams that want to run a structured experiment with the skill. It provides validated survey items from peer-reviewed research on developer thriving and AI skill threat, guidance on interpreting results, a "team boast" template for communicating findings to leadership, and Claude.md nudges for statistical rigor. The measures are released under CC-BY-SA 4.0 and link to open-access research supplements.

Current Status

According to the repository metadata, Learning Opportunities was created in early 2026 and last updated in May 2026, with 1,775 stars and 53 forks on GitHub as reported by the project's own About page. The project is actively maintained and pairs with a companion skill, Learning-Goal, which guides users through structured learning goal-setting using Mental Contrasting with Implementation Intentions (MCII).

Pricing

Open Source

Free and open source under the Creative Commons Attribution 4.0 International license. No cost to use, modify, or distribute.

- Core learning-opportunities skill

- learning-opportunities-auto post-commit hook

- orient repo orientation skill

- MEASURE-THIS.md team experiment playbook

- Full source code access

Capabilities

Key Features

- Adaptive learning exercises triggered after significant architectural work

- Evidence-based exercise types: prediction, generation, retrieval practice, spaced repetition

- Claude pauses and waits for developer input rather than answering its own questions

- Suppression conditions to prevent over-prompting (1 decline or 2 completions per session)

- Optional post-commit automatic prompting via learning-opportunities-auto

- Repo orientation lessons via the orient companion skill

- MEASURE-THIS.md team experiment playbook with validated survey items

- Customizable trigger conditions, exercise types, and session caps

- Pairs with Learning-Goal skill for structured goal-setting using MCII

- CC-BY-4.0 open-source license