Lemonade

Open-source local LLM server for Windows, Linux, and macOS that runs LLMs, image generation, speech, and more on GPUs and NPUs with an OpenAI-compatible API.

At a Glance

Fully open-source under Apache 2.0. Free to download, use, and self-host on any PC.

Listed Apr 2026

About Lemonade

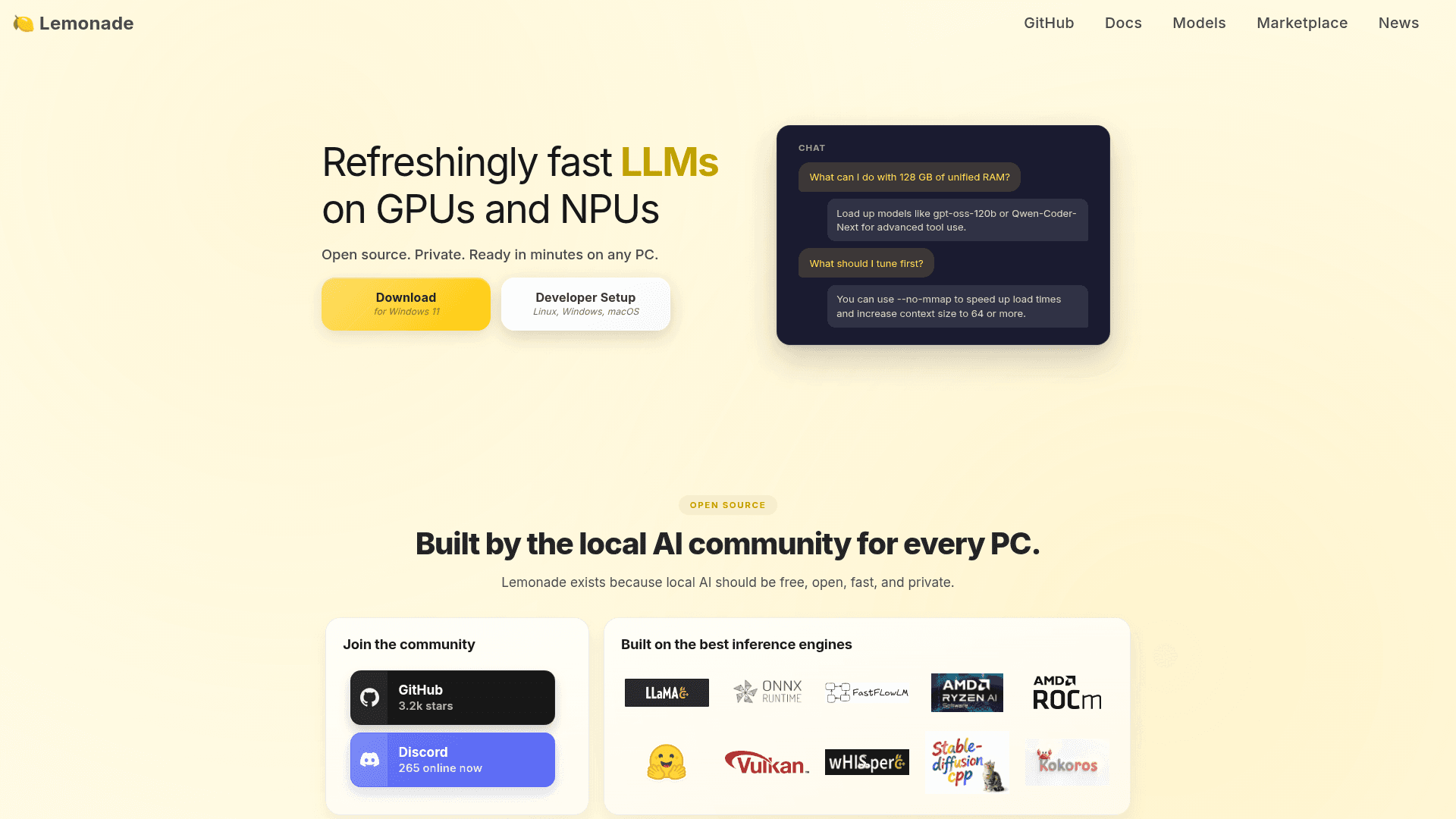

Lemonade is an open-source, privacy-first local AI server that runs LLMs, image generation, transcription, and speech synthesis on your PC's GPU or NPU. It installs in under a minute, auto-configures for your hardware, and exposes an OpenAI-compatible API so hundreds of apps work out of the box. Built on top of inference engines like llama.cpp, ONNX Runtime, FastFlowLM, and Ryzen AI SW, it supports running multiple models simultaneously across Windows, Linux, and macOS (beta).

- One-Minute Install: A simple MSI installer for Windows 11 sets up the entire stack automatically, including hardware-specific dependencies.

- OpenAI API Compatible: Point any OpenAI-compatible app at `localhost:8000` and get chat, vision, image generation, transcription, and speech generation immediately.

- Multi-Engine Support: Leverages llama.cpp, ONNX Runtime, FastFlowLM, Ryzen AI SW, ROCm, Vulkan, whisper.cpp, stable-diffusion.cpp, and Kokoros for broad model and hardware coverage.

- Auto-Hardware Configuration: Detects and configures GPU and NPU dependencies automatically, removing manual setup friction.

- Multiple Models at Once: Run more than one model simultaneously to support complex or multi-modal workflows.

- Built-in GUI App: A graphical interface lets you browse, download, try, and switch between models quickly without touching the command line.

- Cross-Platform: Consistent experience across Windows, Linux, and macOS (beta), with Debian packages available via PPA.

- Unified Modality API: Single local service endpoint covers chat, vision, image generation, transcription, and speech generation.

- Lightweight Native Backend: The core C++ service binary is only 2 MB, minimizing resource overhead.

- Marketplace Integrations: Works out of the box with Open WebUI, n8n, GitHub Copilot, Continue, OpenHands, Dify, and more.
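
To make the OpenAI-compatible API point concrete, here is a minimal Python sketch of a chat request aimed at `localhost:8000`. It uses only the standard library. The `/api/v1` path prefix and the model name `example-model` are assumptions for illustration and are not stated in this listing; check your own install for the actual base path and the models you have downloaded.

```python
import json
import urllib.request

# Assumptions (not confirmed by this listing): the "/api/v1" prefix and the
# model name are placeholders. Only "localhost:8000" comes from the listing.
BASE_URL = "http://localhost:8000/api/v1"
MODEL = "example-model"  # hypothetical model identifier


def build_chat_request(
    prompt: str, base_url: str = BASE_URL, model: str = MODEL
) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(prompt: str) -> str:
    """Send the request to a running local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    # Standard OpenAI chat completion response shape.
    return body["choices"][0]["message"]["content"]
```

With the server running and a model loaded, `send_chat("Hello")` would return the model's reply as a string. Building the request separately from sending it keeps the payload easy to inspect before anything touches the network.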

Pricing

Open Source (Free)

Fully open-source under Apache 2.0. Free to download, use, and self-host on any PC.

- Local LLM inference on GPU and NPU

- OpenAI-compatible API

- Image generation

- Speech synthesis

- Audio transcription

Capabilities

Key Features

- Local LLM inference on GPU and NPU

- OpenAI-compatible REST API

- Image generation (stable-diffusion.cpp)

- Speech generation (Kokoros)

- Audio transcription (whisper.cpp)

- Multi-engine support (llama.cpp, ONNX Runtime, FastFlowLM, Ryzen AI SW, ROCm, Vulkan)

- Run multiple models simultaneously

- Auto hardware configuration

- Built-in GUI for model management

- Cross-platform: Windows, Linux, macOS (beta)

- Debian PPA packages

- Hugging Face GGUF model search and download

- NPU support via FastFlowLM and Ryzen AI SW

- 2 MB native C++ backend
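
Because every modality is served from one OpenAI-compatible endpoint, a client can pick a route by modality rather than by service. The sketch below maps the modalities named above to the route names used by the public OpenAI REST API. Whether a given Lemonade build serves each route at exactly this path, and the `/api/v1` prefix itself, are assumptions to verify against your local server.

```python
# Route names follow the public OpenAI REST API. That Lemonade exposes each
# one at this path, and the "/api/v1" prefix, are assumptions for illustration.
OPENAI_STYLE_ROUTES = {
    "chat": "chat/completions",
    "vision": "chat/completions",  # images travel as chat message content parts
    "image_generation": "images/generations",
    "transcription": "audio/transcriptions",
    "speech": "audio/speech",
}


def endpoint_for(modality: str, base_url: str = "http://localhost:8000/api/v1") -> str:
    """Return the full URL for a modality, or raise ValueError if unknown."""
    try:
        route = OPENAI_STYLE_ROUTES[modality]
    except KeyError:
        raise ValueError(f"unknown modality: {modality!r}") from None
    return f"{base_url.rstrip('/')}/{route}"
```

A lookup table like this keeps the base URL in one place, so pointing an app at a different host or port is a single-argument change.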