LLM From Scratch

A hands-on workshop where you write every piece of a GPT training pipeline yourself, building a ~10M parameter language model that trains on a laptop in under an hour.

At a Glance

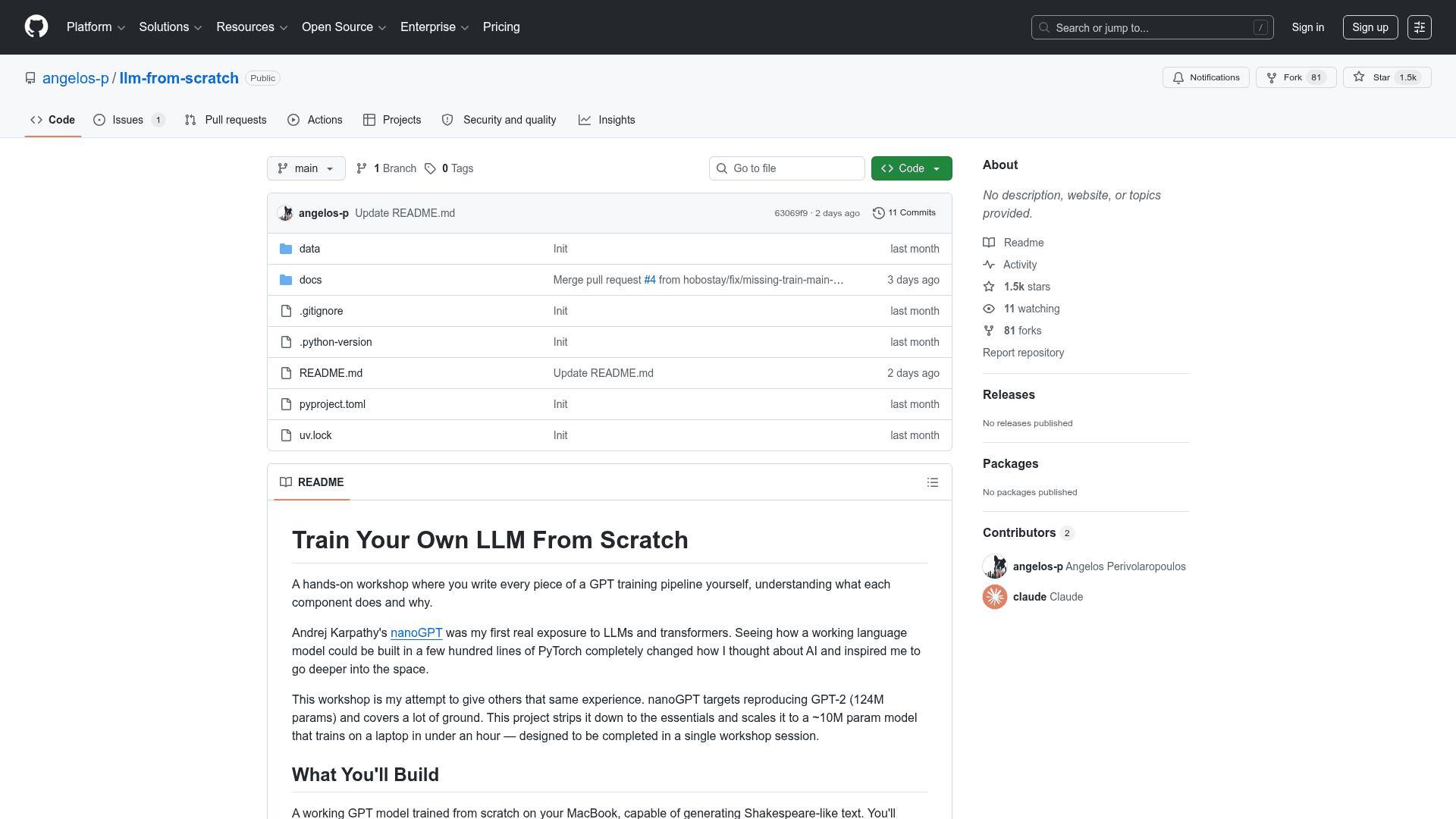

Fully free and open-source workshop available on GitHub.

Listed May 2026

About LLM From Scratch

LLM From Scratch is a hands-on educational workshop that guides you through building a complete GPT training pipeline from the ground up using PyTorch. Inspired by Andrej Karpathy's nanoGPT, it strips the process down to the essentials and scales up to a ~10M parameter model that trains on a laptop in under an hour, so the whole pipeline fits in a single workshop session. You write every component yourself (tokenizer, model architecture, training loop, and text generation), gaining a deep understanding of how modern language models work.
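To give a feel for the first of those components, here is a minimal sketch of a character-level tokenizer in plain Python. The class name `CharTokenizer` and its methods are hypothetical and chosen for illustration; the workshop's own code may be organized differently.

```python
class CharTokenizer:
    """Character-level tokenizer: one integer ID per distinct character.

    Hypothetical illustration, not the workshop's actual class.
    """

    def __init__(self, text: str) -> None:
        # Sorting makes the vocabulary deterministic for a given corpus.
        self.chars = sorted(set(text))
        self.stoi = {ch: i for i, ch in enumerate(self.chars)}
        self.itos = {i: ch for ch, i in self.stoi.items()}

    @property
    def vocab_size(self) -> int:
        return len(self.chars)

    def encode(self, s: str) -> list[int]:
        # Raises KeyError for characters that never appeared in the corpus.
        return [self.stoi[ch] for ch in s]

    def decode(self, ids: list[int]) -> str:
        return "".join(self.itos[i] for i in ids)


corpus = "To be, or not to be"
tok = CharTokenizer(corpus)
ids = tok.encode("to be")          # [8, 6, 0, 3, 4]
assert tok.decode(ids) == "to be"  # encoding round-trips losslessly
```

With a vocabulary this small (9 symbols for the sample string above), the embedding table stays tiny, which is one reason character-level tokenization suits small models and small datasets.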

- Tokenizer implementation — Build a character-level tokenizer that converts text into token IDs the model can process, and learn why BPE fails on small datasets.

- Transformer architecture — Write the full GPT model including token embeddings, positional embeddings, multi-head self-attention, layer normalization, and MLP feed-forward blocks.

- Training loop — Implement the complete training pipeline with forward pass, cross-entropy loss, backpropagation, AdamW optimizer, gradient clipping, and learning rate scheduling.

- Text generation — Build autoregressive inference with temperature scaling and top-k sampling to generate Shakespeare-like text from your trained model.

- Multiple model configs — Choose from Tiny (~0.5M params, ~5 min), Small (~4M params, ~20 min), or Medium (~10M params, ~45 min) configurations to match your hardware and time.

- Hardware flexibility — Automatically uses Apple Silicon GPU (MPS), NVIDIA GPU (CUDA), or CPU; also runs on Google Colab for those without a local setup.

- Structured 6-part curriculum — Work through tokenization, transformer architecture, training loop, text generation, scaling experiments, and a competition to train the best AI poet.

- uv-based setup — Get started quickly with `uv sync` for dependency management, or install manually with pip for Colab environments.
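The text generation item in the list above combines temperature scaling with top-k sampling. The following is a dependency-free sketch of that sampling step, written in plain Python so it can be read without PyTorch. The function name `sample_next_token` and its parameters are assumptions made for illustration, not the workshop's API.

```python
import math
import random


def sample_next_token(logits, temperature=1.0, top_k=None, rng=random):
    """Sample one token ID from raw logits (hypothetical helper)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    if top_k is not None and top_k < 1:
        raise ValueError("top_k must be at least 1")

    # Temperature below 1 sharpens the distribution, above 1 flattens it.
    scaled = [x / temperature for x in logits]

    # Rank token IDs by score and keep only the k best (all if top_k is None).
    candidates = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)
    if top_k is not None:
        candidates = candidates[:top_k]

    # Unnormalized softmax over the survivors; subtracting the maximum
    # keeps math.exp from overflowing.
    peak = scaled[candidates[0]]
    weights = [math.exp(scaled[i] - peak) for i in candidates]

    # random.choices normalizes the weights internally.
    return rng.choices(candidates, weights=weights, k=1)[0]


logits = [2.0, 0.5, -1.0, 3.0]
token = sample_next_token(logits, temperature=0.8, top_k=2, rng=random.Random(0))
assert token in (0, 3)  # only the two highest-scoring tokens can be drawn
```

Autoregressive generation repeats this step: the sampled ID is appended to the context and the model is run again to produce the next set of logits. In a PyTorch implementation the same logic would typically operate on tensors, for example with `torch.topk` and `torch.multinomial`.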

Pricing

Open Source

- Full GPT training pipeline source code

- 6-part workshop curriculum

- Shakespeare dataset

- Multiple model configurations

- Google Colab support

Capabilities

Key Features

- Character-level tokenizer implementation

- Full GPT transformer architecture from scratch

- Complete training loop with AdamW optimizer

- Autoregressive text generation with temperature and top-k sampling

- Multiple model size configurations (Tiny, Small, Medium)

- Apple Silicon (MPS), CUDA, and CPU support

- Google Colab compatibility

- 6-part structured workshop curriculum

- Shakespeare dataset included

- Learning rate scheduling and gradient clipping
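The listing does not say which learning rate schedule the workshop uses. As an illustration of what such a schedule can look like, the sketch below implements linear warmup followed by cosine decay, a common choice for GPT-style training. The function name `lr_at_step` and every default value here are assumptions, not figures from the workshop.

```python
import math


def lr_at_step(step, max_lr=3e-4, min_lr=3e-5, warmup_steps=100, max_steps=5000):
    """Linear warmup to max_lr, then cosine decay to min_lr.

    All defaults are illustrative assumptions.
    """
    if step < warmup_steps:
        # Ramp up linearly so early, noisy gradients take small steps.
        return max_lr * (step + 1) / warmup_steps
    if step >= max_steps:
        return min_lr
    progress = (step - warmup_steps) / (max_steps - warmup_steps)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))  # falls from 1 to 0
    return min_lr + cosine * (max_lr - min_lr)


for step in (0, 100, 2500, 5000):
    print(step, lr_at_step(step))
```

In a PyTorch training loop, a value like this would usually be written into each entry of `optimizer.param_groups` before the optimizer step, and gradient clipping would be applied between the backward pass and the optimizer step with `torch.nn.utils.clip_grad_norm_`.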