LocalScore

An open benchmark tool that helps you understand how well your computer can handle local AI tasks.

At a Glance

About LocalScore

LocalScore is an open-source benchmarking tool designed to measure and compare how effectively different hardware configurations can run local AI workloads. It provides standardized performance metrics for running large language models locally, helping users understand their system's capabilities for AI inference tasks. The tool is part of the Mozilla Builders program and offers both a web interface for viewing results and a CLI for running benchmarks.

Key Features:

-

Open Benchmark System - Provides transparent, community-driven benchmarking for local AI performance across various hardware configurations including NVIDIA GPUs, Apple Silicon, and other accelerators.

-

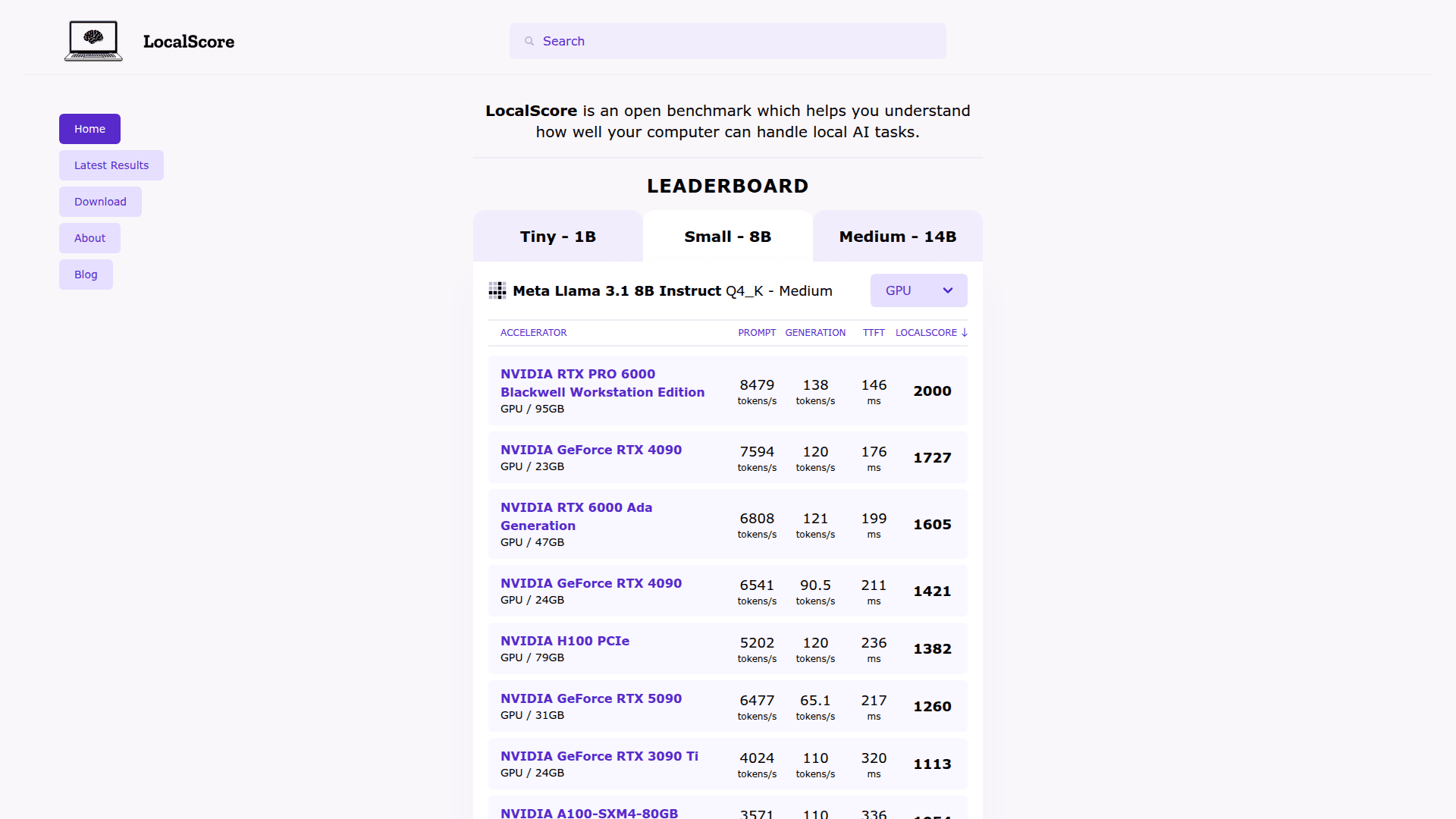

Comprehensive Performance Metrics - Measures key indicators including prompt processing speed (tokens/s), generation speed (tokens/s), time to first token (TTFT), and an overall LocalScore rating for easy comparison.

-

Hardware Comparison Database - Maintains an extensive database of benchmark results across different GPUs and accelerators, from consumer cards like RTX 4090 to enterprise hardware like NVIDIA H100 and A100.

-

Model-Specific Testing - Benchmarks performance across popular AI models including Llama 3.2, Meta Llama 3.1, and Qwen2.5 in various quantization formats.

-

CLI Tool Integration - Offers a command-line interface built on llamafile for running benchmarks on your own hardware and contributing results to the community database.

-

Cross-Platform Support - Works across different operating systems and hardware platforms, supporting both GPU and CPU-based inference testing.
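The throughput and latency metrics named in the feature list can be derived from a handful of timings. The following is a minimal sketch, not LocalScore's own code: the `RunTimings` fields and the `composite_score` formula (a scaled geometric mean) are assumptions made for illustration, and this page does not state how the actual LocalScore rating is computed.

```python
from dataclasses import dataclass
from math import prod


@dataclass
class RunTimings:
    """Hypothetical timings for one benchmark run (field names are illustrative)."""
    prompt_tokens: int          # tokens in the input prompt
    generated_tokens: int       # tokens produced by the model
    prompt_seconds: float       # time spent processing the prompt
    generation_seconds: float   # time spent generating output tokens
    first_token_seconds: float  # delay from request start to first output token


def prompt_speed(t: RunTimings) -> float:
    """Prompt processing speed in tokens/s."""
    return t.prompt_tokens / t.prompt_seconds


def generation_speed(t: RunTimings) -> float:
    """Generation speed in tokens/s."""
    return t.generated_tokens / t.generation_seconds


def ttft_ms(t: RunTimings) -> float:
    """Time to first token in milliseconds."""
    return t.first_token_seconds * 1000.0


def composite_score(t: RunTimings) -> float:
    """Assumed composite: geometric mean of both speeds and inverse TTFT, scaled by 10.

    Lower TTFT is better, so it enters as 1000 / TTFT(ms) to keep
    "higher is better" for all three terms.
    """
    parts = [prompt_speed(t), generation_speed(t), 1000.0 / ttft_ms(t)]
    return 10.0 * prod(parts) ** (1.0 / len(parts))


# Example with made-up timings (not a real benchmark result).
run = RunTimings(
    prompt_tokens=1000,
    generated_tokens=200,
    prompt_seconds=2.0,
    generation_seconds=4.0,
    first_token_seconds=0.5,
)
print(prompt_speed(run))      # 500.0 tokens/s
print(generation_speed(run))  # 50.0 tokens/s
print(ttft_ms(run))           # 500.0 ms
print(round(composite_score(run), 1))
```

A geometric mean is used here so that no single metric dominates the composite; a system has to do reasonably well on all three to score highly.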

To get started, visit the LocalScore website to browse existing benchmark results and compare hardware performance. Download the CLI tool from the GitHub repository to run benchmarks on your own system. Results can be submitted to the community database to help others make informed decisions about hardware for local AI workloads. The tool is particularly useful for developers and enthusiasts looking to optimize their local AI inference setup or evaluate hardware purchases for AI applications.


Pricing

Free

Completely free and open-source benchmarking tool

- Access to benchmark results database

- Hardware comparison tools

- CLI tool for running benchmarks

- Community result submissions

- Open source access

Capabilities

Key Features

- Open benchmark system for local AI performance

- Prompt processing speed measurement (tokens/s)

- Generation speed measurement (tokens/s)

- Time to first token (TTFT) tracking

- Overall LocalScore rating

- Hardware comparison database

- Model-specific benchmarking

- CLI tool for running benchmarks

- Community-contributed results

- Support for multiple GPU and accelerator types
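To illustrate how community-contributed results could be compared across hardware, here is a small sketch that filters results by model and ranks them by generation speed. The `Result` record, its field names, and the placeholder entries are all hypothetical; they do not reflect the LocalScore database schema or any real benchmark numbers.

```python
from typing import List, NamedTuple


class Result(NamedTuple):
    """Hypothetical benchmark record (not the LocalScore schema)."""
    accelerator: str
    model: str
    generation_tps: float  # generation speed in tokens/s


def rank_by_generation_speed(results: List[Result], model: str) -> List[Result]:
    """Return results for one model, fastest generation speed first."""
    matching = [r for r in results if r.model == model]
    return sorted(matching, key=lambda r: r.generation_tps, reverse=True)


# Placeholder entries for illustration only; these are not measured values.
results = [
    Result("accelerator-a", "model-x", 42.0),
    Result("accelerator-b", "model-x", 87.5),
    Result("accelerator-c", "model-y", 120.0),
]

for r in rank_by_generation_speed(results, "model-x"):
    print(f"{r.accelerator}: {r.generation_tps} tokens/s")
```

Comparing within a single model matters because token throughput for different models (or different quantizations of the same model) is not directly comparable.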