LOFT

LOFT (Long-context Frontiers) is a Google DeepMind benchmark for evaluating large language models on long-context retrieval and reasoning tasks across diverse modalities.

At a Glance

About LOFT

LOFT (Long-context Frontiers) is an open-source benchmark released by Google DeepMind to evaluate large language models (LLMs) on tasks requiring long-context understanding, retrieval, and multi-step reasoning. Its tasks span retrieval, retrieval-augmented generation (RAG), SQL-like reasoning, and many-shot in-context learning across text, visual, and audio inputs. The benchmark is designed to surface real-world challenges that appear when the relevant corpus is placed directly in the model's context window rather than behind a separate retrieval system.

- Long-context evaluation — Tests LLMs on tasks that require processing and reasoning over very long input contexts, with datasets provided at several context lengths up to 1 million tokens.

- Multi-task coverage — Includes diverse task types such as retrieval, RAG with multi-hop QA, SQL-like reasoning, and many-shot in-context learning, spanning text, visual, and audio modalities.

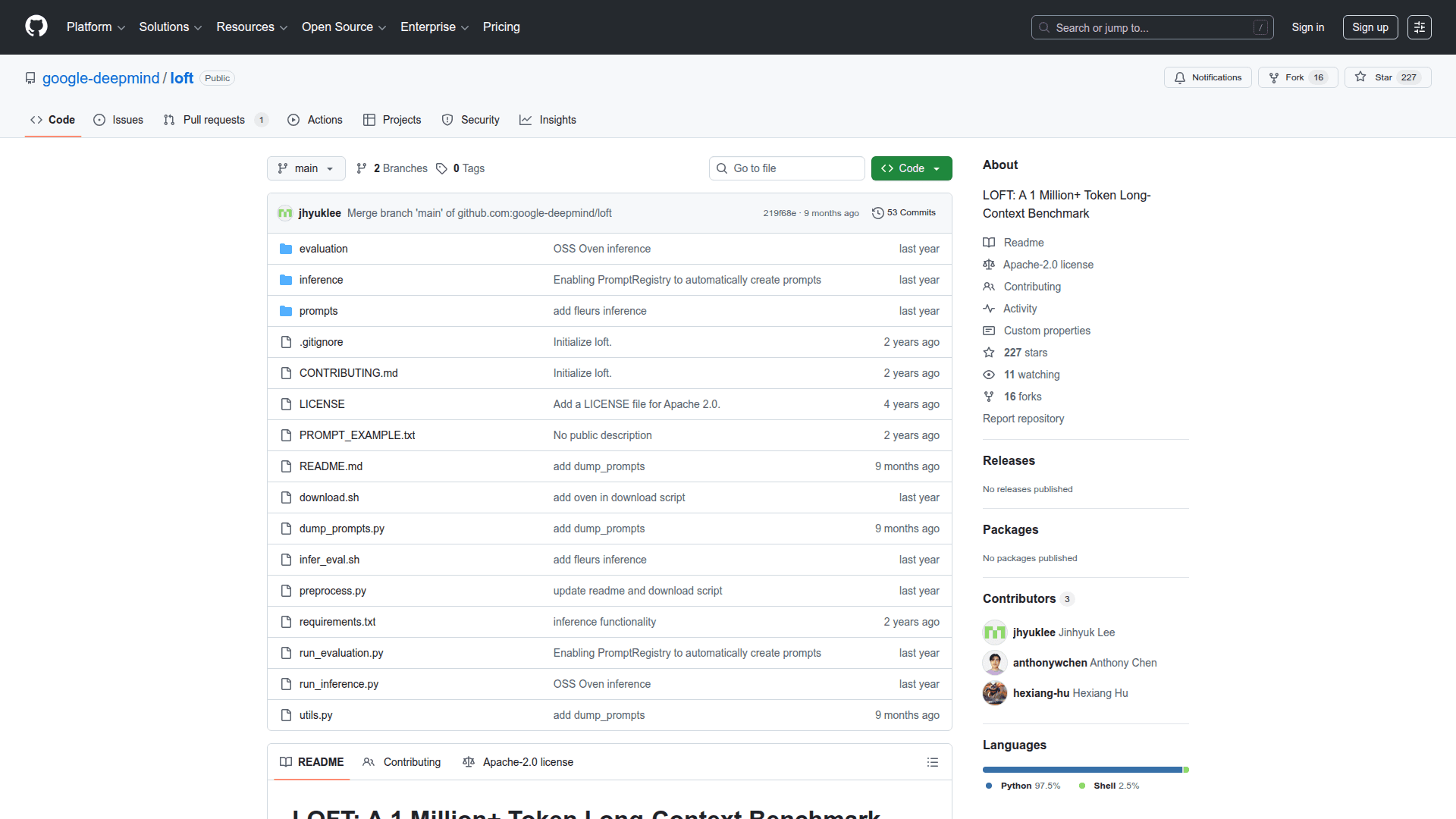

- Open-source research tool — Hosted on GitHub under Google DeepMind, making it freely accessible for researchers to reproduce, extend, and build upon.

- Benchmark suite — Provides standardized datasets and evaluation scripts so researchers can compare model performance consistently.

- RAG and in-context learning focus — Specifically designed to stress-test retrieval-augmented generation and many-shot in-context learning at long context lengths.

- Getting started — Clone the repository from GitHub, follow the setup instructions in the README, and run the provided evaluation scripts against your model of choice.
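To make the retrieval-style evaluation described above more concrete, here is a minimal Python sketch of the general idea: pack a corpus into a single prompt under a context budget, then score the document IDs a model returns. It is an illustration only and is not taken from the LOFT repository. The function names `build_corpus_prompt` and `recall_at_k`, the prompt wording, and the whitespace-based token count are all assumptions made for this example; the actual data format and evaluation scripts are described in the project's README.

```python
from typing import Dict, Sequence


def build_corpus_prompt(corpus: Dict[str, str], query: str, max_tokens: int) -> str:
    """Pack documents into one prompt until a rough token budget is reached.

    Hypothetical helper: tokens are approximated by whitespace-separated
    words. A real run would use the target model's tokenizer instead.
    """
    instruction = "Return the ID of the document that answers the query."
    question = f"Query: {query}\nAnswer with a document ID:"
    used = len(instruction.split()) + len(question.split())
    lines = [instruction]
    for doc_id, text in corpus.items():
        entry = f"ID: {doc_id} | {text}"
        cost = len(entry.split())
        if used + cost > max_tokens:
            break  # stop before exceeding the budget
        lines.append(entry)
        used += cost
    lines.append(question)
    return "\n".join(lines)


def recall_at_k(predicted: Sequence[str], gold: Sequence[str], k: int) -> float:
    """Fraction of gold document IDs that appear in the top-k predictions."""
    if not gold:
        return 0.0
    top_k = set(predicted[:k])
    return sum(1 for g in gold if g in top_k) / len(gold)


# Toy corpus standing in for a benchmark dataset (made-up data).
corpus = {
    "d1": "The Eiffel Tower is in Paris.",
    "d2": "Mount Fuji is the tallest mountain in Japan.",
    "d3": "The Nile flows through Egypt.",
}
prompt = build_corpus_prompt(corpus, "Which mountain is tallest in Japan?", max_tokens=64)

# Pretend the model answered with a ranked list of document IDs.
score = recall_at_k(predicted=["d2", "d3"], gold=["d2"], k=1)
```

In this sketch, shrinking `max_tokens` drops documents from the end of the corpus, which is one simple way to produce the same task at different context lengths. A real evaluation would send `prompt` to the model under test and parse its answer before scoring.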

Pricing

Open Source

Freely available open-source benchmark for long-context LLM evaluation.

- Full benchmark suite access

- Standardized datasets

- Evaluation scripts

- Multi-task coverage

- Reproducible research

Capabilities

Key Features

- Long-context LLM evaluation

- Multi-task benchmark suite

- Retrieval and reasoning tasks

- Multi-hop question answering

- RAG pipeline stress-testing

- In-context learning evaluation

- Standardized datasets and evaluation scripts

- Open-source and reproducible