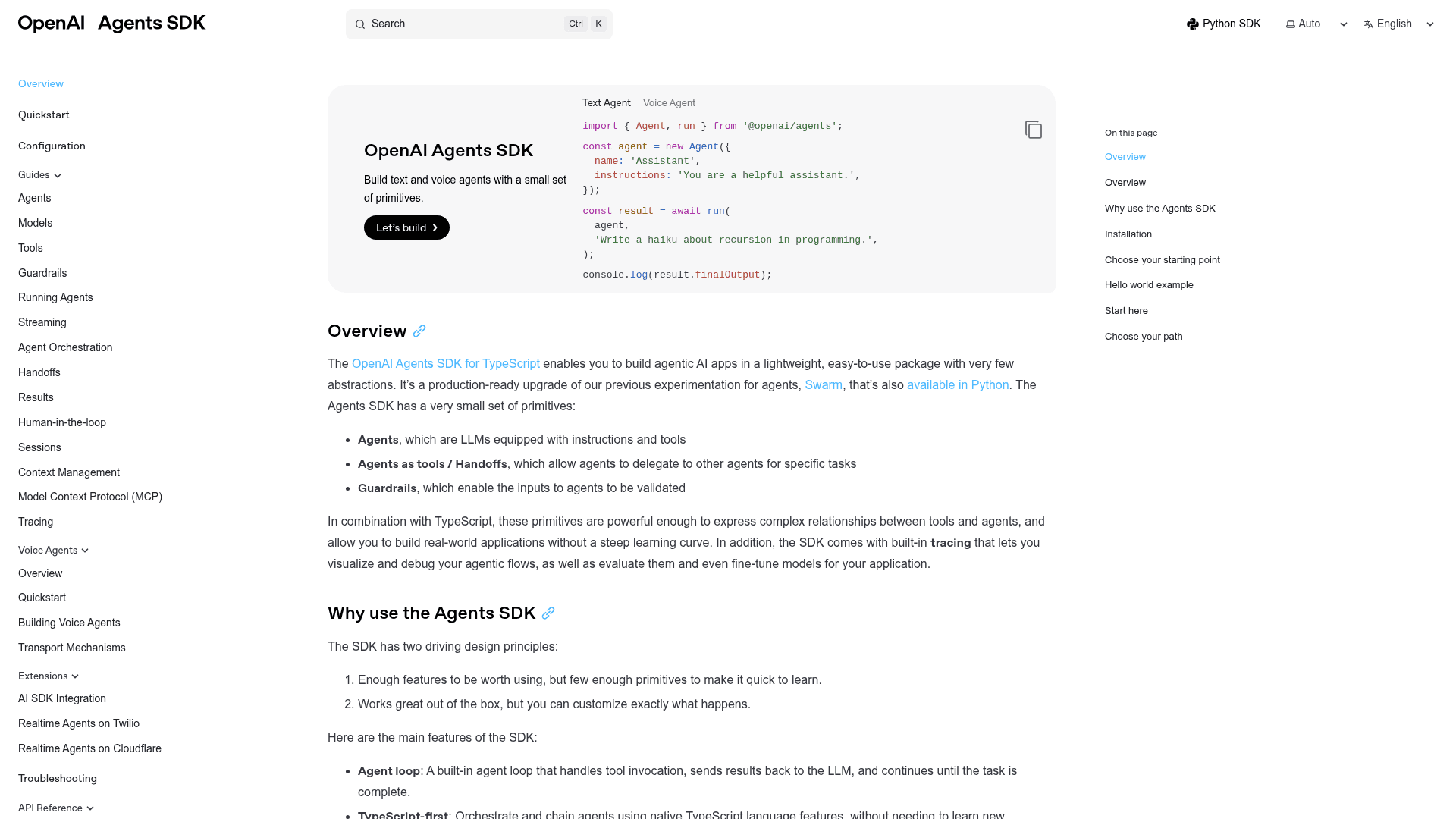

OpenAI Agents SDK

OpenAI's lightweight, provider-agnostic framework for building multi-agent and voice agent workflows in Python and TypeScript with very few abstractions.

About OpenAI Agents SDK

Overview

The OpenAI Agents SDK is an open-source, lightweight framework for building agentic applications. It is the production-ready successor to OpenAI's earlier Swarm experiment, designed around a small set of primitives instead of heavy abstractions: Agents (LLMs configured with instructions, tools, and guardrails), Handoffs (specialized tool calls that transfer control between agents), and Guardrails (configurable input and output safety checks). A built-in agent loop runs tool calls and handoffs until a final output is produced, so you focus on agent logic rather than orchestration plumbing.
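To make the agent loop concrete, here is a minimal toy model of it in plain Python. It does not import or reproduce the SDK: `ToyAgent`, `run_loop`, `MaxTurnsExceeded`, and the scripted `decide` functions are all hypothetical names invented for illustration, and a scripted function stands in for the LLM. It only shows the control flow described above: run tools, follow handoffs, stop at a final output, and give up after a turn limit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple, Union


@dataclass
class ToolCall:
    name: str
    argument: str


@dataclass
class Handoff:
    target: str  # name of the agent that should take over


@dataclass
class FinalOutput:
    text: str


Step = Union[ToolCall, Handoff, FinalOutput]
Transcript = List[Tuple[str, str]]  # (role, content) pairs


@dataclass
class ToyAgent:
    name: str
    # Stand-in for an LLM: maps the transcript so far to the next step.
    decide: Callable[[Transcript], Step]
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


class MaxTurnsExceeded(Exception):
    """Raised when the loop runs out of turns before a final output."""


def run_loop(agents: Dict[str, ToyAgent], start: str, user_input: str,
             max_turns: int = 5) -> str:
    transcript: Transcript = [("user", user_input)]
    current = agents[start]
    for _ in range(max_turns):
        step = current.decide(transcript)
        if isinstance(step, FinalOutput):
            return step.text
        if isinstance(step, Handoff):
            # A handoff changes which agent handles the next turn.
            current = agents[step.target]
            continue
        # Otherwise run the requested tool and feed the result back in.
        result = current.tools[step.name](step.argument)
        transcript.append((f"tool:{step.name}", result))
    raise MaxTurnsExceeded(f"no final output after {max_turns} turns")


def triage_decide(transcript: Transcript) -> Step:
    # Scripted router: always delegate to the counting specialist.
    return Handoff("counter")


def counter_decide(transcript: Transcript) -> Step:
    role, content = transcript[-1]
    if role == "user":
        return ToolCall("word_count", content)
    return FinalOutput(f"The input has {content} words.")


AGENTS = {
    "triage": ToyAgent("triage", triage_decide),
    "counter": ToyAgent(
        "counter", counter_decide,
        tools={"word_count": lambda text: str(len(text.split()))},
    ),
}

if __name__ == "__main__":
    print(run_loop(AGENTS, "triage", "hello agent world"))
```

In this sketch the run takes three turns: one handoff, one tool call, and one final answer. Lowering `max_turns` to 2 raises `MaxTurnsExceeded`, which mirrors the role a turn limit plays as a safety net against loops that never settle.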

The SDK is published in two officially supported flavors that share the same primitives:

- TypeScript / JavaScript: `npm install @openai/agents` (this listing's primary documentation URL)

- Python: `pip install openai-agents`

Despite the OpenAI name, the SDK is provider-agnostic. It works out of the box with OpenAI's Responses and Chat Completions APIs, and supports non-OpenAI models through adapters such as the Vercel AI SDK integration in the JS/TS package.
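The adapter idea can be illustrated with a small, generic sketch. Nothing here is the SDK's actual model interface: `Model`, `EchoModel`, `ThirdPartyClient`, and `ThirdPartyAdapter` are hypothetical names, and the "vendor" client is a stub. The point is only that agent code depends on one narrow interface, and an adapter translates a differently shaped client into it.

```python
from typing import Protocol


class Model(Protocol):
    """The single interface the agent code depends on (hypothetical)."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """A model that already matches the interface."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ThirdPartyClient:
    """Stub for another vendor's client with a different method and shape."""

    def complete_text(self, text: str) -> dict:
        return {"completion": text[::-1]}


class ThirdPartyAdapter:
    """Wraps the vendor client so it satisfies the Model interface."""

    def __init__(self, client: ThirdPartyClient) -> None:
        self._client = client

    def generate(self, prompt: str) -> str:
        return self._client.complete_text(prompt)["completion"]


def run_agent(model: Model, prompt: str) -> str:
    # Agent logic never needs to know which provider sits behind the model.
    return model.generate(prompt)


if __name__ == "__main__":
    print(run_agent(EchoModel(), "hi"))
    print(run_agent(ThirdPartyAdapter(ThirdPartyClient()), "abc"))
```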

Core primitives

- Agents: declarative `Agent` objects that bundle a model, instructions, tools, guardrails, and child agent handoffs.

- Handoffs: first-class transfers of control between agents, modeled as specialized tool calls. Useful for routing and specialist sub-agents.

- Guardrails: input and output validators that can trip a tripwire and halt the run with a typed error such as `InputGuardrailTripwireTriggered`.

- Function tools: define tools with Zod (TS) or Pydantic (Python) schemas; the SDK validates arguments and pipes results back into the agent loop.

- Sessions: built-in memory primitives like `MemorySession` and `OpenAIConversationsSession` for managing multi-turn state without rolling your own store.

- Tracing: every run, tool call, and handoff is captured as spans you can view in OpenAI's tracing UI or export via custom processors for debugging and optimization.
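The guardrail tripwire pattern from the list above can be sketched without the SDK. The names below (`GuardrailResult`, `InputTripwireError`, `guarded_run`, `no_card_numbers`) are hypothetical and the digit-run check is a deliberately crude stand-in for a real validator. As a simplification, this sketch runs every guardrail before the agent; it is not a statement about how the SDK schedules them.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GuardrailResult:
    tripwire_triggered: bool
    reason: str = ""


class InputTripwireError(Exception):
    """Hypothetical typed error carrying the failing guardrail's result."""

    def __init__(self, result: GuardrailResult) -> None:
        super().__init__(result.reason)
        self.result = result


Guardrail = Callable[[str], GuardrailResult]


def no_card_numbers(text: str) -> GuardrailResult:
    # Trip on any run of 13 or more digits once spaces and dashes are removed.
    run = 0
    for ch in text.replace(" ", "").replace("-", ""):
        run = run + 1 if ch.isdigit() else 0
        if run >= 13:
            return GuardrailResult(True, "possible card number in input")
    return GuardrailResult(False)


def guarded_run(user_input: str, guardrails: List[Guardrail],
                agent: Callable[[str], str]) -> str:
    for check in guardrails:
        result = check(user_input)
        if result.tripwire_triggered:
            # Halt before the agent ever sees the input.
            raise InputTripwireError(result)
    return agent(user_input)


if __name__ == "__main__":
    print(guarded_run("hello", [no_card_numbers], str.upper))
    try:
        guarded_run("card 4111 1111 1111 1111", [no_card_numbers], str.upper)
    except InputTripwireError as err:
        print("blocked:", err.result.reason)
```

Raising a dedicated exception type, rather than returning an error string, lets the calling application distinguish a policy block from an ordinary failure and respond accordingly.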

Notable features

- Native MCP server support via `MCPServerStdio`, `MCPServerSSE`, `MCPServerStreamableHttp`, and a hosted MCP tool helper, so you can expose any Model Context Protocol server as agent tools.

- Realtime Agents for voice: `RealtimeAgent` and `RealtimeSession` with WebRTC, WebSocket, and SIP transports. A separate browser-optimized `@openai/agents-realtime` package keeps client bundles small.

- Human-in-the-loop approval points where the run pauses on a tool call until your application approves or rejects it.

- Streaming responses for tokens, tool calls, and handoff events.

- Hosted tools including `webSearchTool`, `fileSearchTool`, `codeInterpreterTool`, `imageGenerationTool`, `computerTool`, and `shellTool`.

- Structured outputs with schema-validated final results.

- Parallel agent runs and aggregation patterns for fan-out workflows.

- Configurable retries with built-in `retryPolicies` and a `MaxTurnsExceededError` safety net via `maxTurns`.
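The human-in-the-loop approval flow mentioned in the list above follows a pause-and-resume shape that can be sketched in a few lines. This is a toy model, not the SDK's interruption API: `PendingApproval`, `Completed`, and `call_tool` are hypothetical names, and the "tool" is a harmless string formatter.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Set, Union


@dataclass
class PendingApproval:
    tool_name: str
    argument: str


@dataclass
class Completed:
    output: str


def call_tool(tools: Dict[str, Callable[[str], str]],
              needs_approval: Set[str],
              tool_name: str,
              argument: str,
              decision: Optional[bool] = None) -> Union[PendingApproval, Completed]:
    """decision: None = not yet reviewed, True = approved, False = rejected."""
    if tool_name in needs_approval:
        if decision is None:
            # Pause: hand the request back to the application for review.
            return PendingApproval(tool_name, argument)
        if not decision:
            return Completed(f"{tool_name} was rejected by a reviewer")
    return Completed(tools[tool_name](argument))


TOOLS = {"delete_file": lambda path: f"deleted {path}"}
SENSITIVE = {"delete_file"}

if __name__ == "__main__":
    first = call_tool(TOOLS, SENSITIVE, "delete_file", "report.txt")
    print(first)  # a PendingApproval: nothing has been executed yet
    resumed = call_tool(TOOLS, SENSITIVE, first.tool_name, first.argument,
                        decision=True)
    print(resumed.output)
```

The key property is that the sensitive tool body never runs until the application supplies an explicit decision, and a rejection still produces a result the agent can react to instead of leaving the run stuck.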

When it fits

The Agents SDK is the natural choice when your stack is OpenAI-native and you want minimal ceremony: Responses API, Realtime API, OpenAI tracing, and OpenAI hosted tools all work without adapters. It is also a strong fit for teams who liked Swarm's simplicity but need production features like guardrails, sessions, MCP, and tracing.

It is a weaker fit when you need strong multi-provider routing across many vendor APIs as a first-class concern; frameworks built around a provider-router abstraction will give you more out of the box there.

Supported runtimes

- Node.js 22+, Deno, and Bun for the JS/TS package

- Cloudflare Workers (experimental, with `nodejs_compat`)

- Python 3.9+ for the Python package

- Browser bundle for Realtime voice agents

License

MIT, with both packages developed in the open on GitHub by OpenAI.

Pricing

Open Source (MIT)

The OpenAI Agents SDK is free and open-source under the MIT license for both the TypeScript (@openai/agents) and Python (openai-agents) packages. You only pay for whatever model provider API calls your agents make.

- Full SDK source on GitHub under MIT license

- Unlimited agents, tools, handoffs, and runs

- Built-in tracing UI access via OpenAI account

- Realtime Voice Agents support

- Native MCP server integration

Capabilities

Key Features

- Lightweight agent primitives: Agents, Handoffs, Guardrails

- Built-in agent loop with configurable max turns

- Function tools with Zod (TypeScript) or Pydantic (Python) schema validation

- Native Model Context Protocol (MCP) server support via stdio, SSE, and streamable HTTP

- Hosted MCP tool helper for connecting to remote MCP servers

- Sessions for multi-turn memory (MemorySession, OpenAIConversationsSession)

- Human-in-the-loop tool approval with run state suspension and resumption

- Built-in tracing with spans for agents, generations, tool calls, handoffs, and guardrails

- Realtime Voice Agents with WebRTC, WebSocket, and SIP transports

- Separate browser-optimized package (@openai/agents-realtime) for client-side voice agents

- Streaming agent output and events in real time

- Structured outputs validated against a schema

- Multi-agent orchestration with first-class agent-to-agent handoffs

- Hosted tools: web search, file search, code interpreter, image generation, computer use, shell

- Provider-agnostic model layer with adapter for Vercel AI SDK and non-OpenAI models

- Configurable retry policies and typed error classes (MaxTurnsExceededError, GuardrailTripwireTriggered)

- Parallel agent execution and result aggregation patterns

- Open-source under MIT license with active OpenAI maintenance
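
The parallel execution and aggregation pattern listed above can be approximated with standard `asyncio`. This sketch uses no SDK calls: `toy_agent` and `fan_out` are hypothetical names, and each "agent" simply uppercases its prompt where a real one would call a model.

```python
import asyncio
from typing import List


async def toy_agent(name: str, prompt: str) -> str:
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"{name}: {prompt.upper()}"


async def fan_out(prompt: str, names: List[str]) -> str:
    # gather runs the coroutines concurrently and returns results
    # in the same order as its arguments.
    results = await asyncio.gather(*(toy_agent(n, prompt) for n in names))
    # Aggregation step: here a simple join; a real workflow might pass
    # the results to another agent to pick or merge the best answer.
    return " | ".join(results)


if __name__ == "__main__":
    print(asyncio.run(fan_out("ship it", ["critic", "editor"])))
```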